Global corporations have poured billions of dollars into generative artificial intelligence suites with the expectation of immediate efficiency gains, yet the reality remains a landscape cluttered with underutilized licenses and frustrated workforces. The market saturation of generative software through integrated software-as-a-service ecosystems has made access nearly universal, but accessibility does not equate to efficacy. While the technical capability of these models has reached an unprecedented peak, the organizational AI Quotient, or AIQ, has largely stagnated. Major software vendors have successfully embedded these tools into daily work environments, yet the gap between having a feature and knowing how to extract value from it continues to widen across global industries.

Distinguishing between technical implementation and actual workforce integration is the defining challenge for modern leadership. Many organizations treated the rollout as a simple software update rather than a fundamental shift in cognitive labor. Consequently, the presence of an AI assistant in a sidebar often serves as a digital ornament rather than a functional teammate, highlighting a failure to address the human side of the equation. This disparity between technological potential and organizational readiness suggests that the bottleneck is no longer the code, but the culture of the workplace itself.

Tracking the Disconnect Between Investment and Measurable Output

Shifting From Technical Deployment to Human Adoption Hurdles

The transition from basic tool procurement to the complex reality of behavioral change represents a significant friction point in the corporate world. Employees often find themselves caught in a cycle where reverting to manual workflows feels safer and more predictable than experimenting with a non-deterministic tool. This behavior suggests that tools are being forced into old workflows rather than workflows being redesigned to accommodate the new technology. When the learning curve is perceived as a barrier to completing daily tasks, the path of least resistance leads workers back to traditional methods, leaving expensive tools idle.

Moreover, emerging opportunities in AI literacy are becoming the primary differentiator for competitive advantage in this environment. Companies that prioritize teaching staff how to conceptually frame problems for AI assistance are seeing a higher rate of sustained usage compared to those that merely provide login credentials. This shift moves the focus from technical availability to the cultivation of a specific mindset that views machine intelligence as a collaborative partner. Addressing these behavioral hurdles is the only way to ensure that the technological revolution actually translates into a professional one.

Quantifying the Financial Impact of Underutilized AI Licenses

Market data reveals a staggering $80.6 million average annual loss per large organization due to unused or underutilized AI software licenses. This financial hemorrhage is a direct byproduct of the productivity paradox, where the initial investment fails to translate into measurable output gains. When seat counts rise without a corresponding increase in user engagement, the return on investment becomes impossible to justify to stakeholders over the long term. This waste highlights a critical oversight in procurement strategies that favor scale over strategic integration.

Projecting this impact into the coming years suggests that the cost of neglect will only grow as license fees increase. Performance indicators are shifting away from simple adoption metrics toward depth of engagement and task completion rates. Success in the current landscape is increasingly defined by how effectively an organization can convert its massive software expenditures into tangible, time-saving outcomes that justify the high cost of entry. Without a change in how usage is tracked, the financial gap will continue to undermine the broader goals of enterprise expansion.
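To make the shift from adoption counts to engagement depth concrete, the following is a minimal sketch of how such a report might be computed. The `SeatUsage` schema, the `license_report` function, the four-active-days threshold, and the per-seat cost are all hypothetical illustrations, not figures or methods taken from the market data cited above.

```python
from dataclasses import dataclass


@dataclass
class SeatUsage:
    """Usage summary for one licensed seat over a billing period (hypothetical schema)."""
    user_id: str
    active_days: int      # days with at least one AI interaction
    tasks_started: int    # tasks where the assistant was invoked
    tasks_completed: int  # tasks finished with the assistant's output kept


def license_report(seats, annual_cost_per_seat, min_active_days=4):
    """Summarize idle spend and engagement depth for a list of SeatUsage records.

    A seat counts as "engaged" when it reaches min_active_days in the period;
    that cutoff is an assumption chosen for illustration only.
    """
    total = len(seats)
    engaged = sum(1 for s in seats if s.active_days >= min_active_days)
    started = sum(s.tasks_started for s in seats)
    completed = sum(s.tasks_completed for s in seats)
    return {
        "seats": total,
        "engaged_seats": engaged,
        # Simple adoption metric: share of seats that are actually used.
        "adoption_rate": engaged / total if total else 0.0,
        # Annual spend on seats that fall below the engagement threshold.
        "idle_annual_spend": (total - engaged) * annual_cost_per_seat,
        # Depth metric: share of AI-assisted tasks that were carried to completion.
        "task_completion_rate": completed / started if started else 0.0,
    }


# Example with made-up numbers: four seats at a hypothetical 360 per seat per year.
seats = [
    SeatUsage("a", active_days=20, tasks_started=10, tasks_completed=8),
    SeatUsage("b", active_days=1, tasks_started=2, tasks_completed=0),
    SeatUsage("c", active_days=0, tasks_started=0, tasks_completed=0),
    SeatUsage("d", active_days=10, tasks_started=8, tasks_completed=6),
]
report = license_report(seats, annual_cost_per_seat=360.0)
```

Reporting the task completion rate alongside the adoption rate is the point of the sketch: a seat count alone would show four licenses, while the depth metrics show that only half of them are producing finished work.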

The Productivity Paradox: Identifying the Obstacles to ROI

The primary cause of tool rejection is the abandonment cycle, which is frequently triggered by the friction of prompt engineering. When a user fails to receive a high-quality result within the first two attempts, the psychological cost of continuing the effort often outweighs the perceived benefit. This trial-and-error plateau prevents staff from reaching a level of proficiency where the tool becomes truly invisible and integrated into their creative or analytical processes. For many, the tool remains a source of work rather than a way to reduce it.
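One way an organization might watch for this abandonment pattern is to measure how many users stop within their first two attempts without ever accepting a result. The sketch below assumes a hypothetical log format (an ordered list of accepted-or-not flags per user) and a hypothetical function name; only the two-attempt threshold comes from the description above.

```python
def early_abandonment_rate(attempts_by_user, max_attempts=2):
    """Share of users who stopped within max_attempts tries without one accepted result.

    attempts_by_user maps a user id to an ordered list of booleans, one per prompt
    attempt, where True means the user accepted the output (hypothetical log format).
    Users with no attempts at all are excluded from the calculation.
    """
    histories = [a for a in attempts_by_user.values() if a]
    if not histories:
        return 0.0
    abandoned = sum(
        1 for a in histories if len(a) <= max_attempts and not any(a)
    )
    return abandoned / len(histories)


# Made-up example: u1 and u3 gave up early, u2 persisted, u4 succeeded at once.
logs = {
    "u1": [False, False],
    "u2": [False, True, True],
    "u3": [False],
    "u4": [True],
    "u5": [],
}
rate = early_abandonment_rate(logs)
```

A rising rate would suggest that users are hitting the trial-and-error plateau before reaching proficiency, which is where peer support or guided practice is likely to matter most.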

Adding to this friction is the rise of AI slop, characterized by a flood of low-quality, automated content that increases information overload without adding value. This phenomenon creates a secondary productivity drain, as employees must spend additional time filtering through generic, AI-generated communications. Furthermore, a significant lack of formal instruction for non-technical staff leaves workers to navigate these complexities alone, which only deepens the reliance on ineffective guesswork. Strategies for success must involve social and peer-based learning to bypass these initial hurdles and foster meaningful engagement.

Navigating the Ethical and Governance Frameworks of Generative AI

Understanding the impact of data privacy regulations is crucial for managing how employees interact with enterprise large language models. Strict compliance standards often limit the types of data that can be processed, which in turn restricts the utility of the technology for specific, high-value tasks. This tension between security and utility frequently leads to the emergence of shadow AI, where staff use unauthorized external tools to bypass corporate restrictions, creating significant risks for data leakage.

Establishing clear security and review standards helps ensure that AI-generated outputs meet organizational quality and accuracy benchmarks before they reach the final consumer. As global standards evolve, they directly influence corporate training and implementation policies, forcing a constant recalibration of governance frameworks. Maintaining this balance requires a transparent approach that protects sensitive information while still allowing for the level of experimentation necessary to derive value from the tools. Compliance must be seen as an enabler of quality rather than just a restrictive barrier.

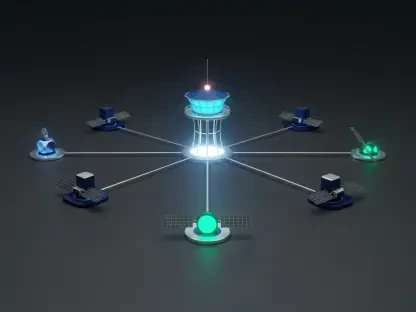

Redefining the Human-Machine Partnership for Long-Term Gains

A shift toward a human-centric strategy is essential to ensure psychological safety and long-term user experience gains. Employees must feel that the technology is designed to augment their abilities rather than replace their positions. By prioritizing the user experience, organizations can reduce the anxiety associated with automation and foster a more open environment for creative problem-solving. This approach transforms the perception of technology from a threat into a supportive infrastructure that empowers the workforce.

The evolution from simple text generators toward autonomous agents represents the next major market disruption. These agents will likely handle more complex, multi-step workflows, fundamentally changing job roles across various business segments. Preparing for this future requires moving beyond static training modules toward continuous, social learning environments. In such a landscape, AI literacy becomes a foundational requirement for every professional, regardless of their specific technical background. The future of work depends on the ability of humans and machines to navigate increasingly complex tasks in tandem.

Bridging the Gap Between AI Potential and Practical Performance

Leadership is beginning to recognize that massive investments have not yet yielded the significant productivity gains forecast during the early stages of the rollout. It has become evident that prioritizing software acquisition over human development leads to a stagnation of value. The path forward demands a cultural transformation in which experimentation is encouraged and the focus shifts from generating raw content to producing high-value, refined outputs. This realization forces a pivot toward quality over quantity in every digital interaction.

Organizations that succeed are those that move away from the assumption of intuitive mastery and instead build robust frameworks for AI literacy. They foster peer-based learning networks that allow workers to share successful strategies and overcome the common pitfalls of the abandonment cycle. By investing in the human element, these companies transform their digital tools from a cost center into a strategic engine of growth. For them, the resulting shift resolves the productivity paradox by prioritizing the development of the workforce alongside the deployment of the machine.