Breaches do not wait for maintenance windows, and neither do floods of bot traffic that melt autoscaling budgets before teams can react, so any platform claiming resilience must prove it under the same pressure that knocks real systems sideways.

Market Context and Definition

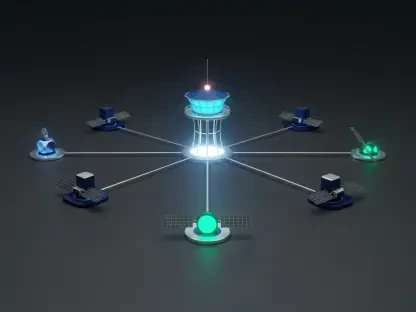

A unified cyber resilience platform brings performance testing, vulnerability discovery, and exposure visibility into a single, orchestrated workflow. Overload, operated by 78 OVER 37 LIMITED in the UK, attempts exactly that by combining advanced load and stress testing, an enhanced penetration testing suite, and a continuous exposure monitor called Leak Check. Rather than handing teams disjointed tools, it links them through shared telemetry, automation, and role-aware controls.

This shift mirrors a broader industry movement from reactive protection to preparation. Cloudflare’s reporting on record DDoS volumes underscored how little time defenders have to diagnose saturation, misconfigurations, or brittle failover paths while traffic spikes. In response, buyers now ask not only “Is it secure?” but “Will it stay fast, usable, and provably resilient when stressed?” Overload’s bet is that a converged design can answer both.

Deep-Dive Analysis

Load and Stress Testing

Overload’s load engine generates realistic user journeys and attack-like surges across web apps, APIs, and edge paths. The emphasis is fidelity: shaping concurrency, payload mix, and geographic spread so that autoscaling, caches, and CDNs are exercised the way production traffic exercises them. This matters because synthetic tests that ignore routing and middleware create false confidence.
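
As a rough illustration of what shaping geographic spread could involve, the Python sketch below splits a virtual-user budget across regions in proportion to integer weights. The function name `allocate`, the region labels, and the weights are hypothetical and are not taken from Overload's product; largest-remainder rounding is simply one reasonable way to keep the total exact.

```python
def allocate(total, weights):
    """Split `total` across keys in proportion to `weights`.

    Uses largest-remainder rounding so the parts always sum to `total`.
    """
    weight_sum = sum(weights.values())
    if weight_sum <= 0:
        raise ValueError("weights must sum to a positive number")
    exact = {key: total * w / weight_sum for key, w in weights.items()}
    alloc = {key: int(value) for key, value in exact.items()}
    leftover = total - sum(alloc.values())
    # Hand the remaining units to the keys with the largest fractional parts.
    by_remainder = sorted(weights, key=lambda k: exact[k] - alloc[k], reverse=True)
    for key in by_remainder[:leftover]:
        alloc[key] += 1
    return alloc


# Hypothetical spread: 100 virtual users across three illustrative regions.
regional_users = allocate(100, {"eu": 5, "us": 3, "apac": 2})
```

The same helper could split a request budget across payload types, which keeps the concurrency plan and the payload mix expressed in one consistent way.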

Performance metrics are tied to operational questions. Throughput, p95/p99 latency, and error rates reveal saturation points; autoscaling and failover behavior show how capacity reacts, not just whether it exists. The platform correlates test phases with infra telemetry to pinpoint bottlenecks—hot shards, noisy neighbors, cold caches—shortening time from symptom to fix.
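
To make those metrics concrete, here is a minimal Python sketch that summarizes one test phase and flags the first phase that breaches a budget. The function names, the dictionary keys, and the thresholds are assumptions made for illustration, not Overload's reporting format; the percentile uses the nearest-rank definition, which is one of several common conventions.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize_phase(latencies_ms, errors, duration_s):
    """Summarize one phase; `latencies_ms` holds successful requests only."""
    total = len(latencies_ms) + errors
    return {
        "throughput_rps": total / duration_s,
        "p95_ms": percentile(latencies_ms, 95),
        "p99_ms": percentile(latencies_ms, 99),
        "error_rate": errors / total,
    }


def first_saturated_phase(phases, p99_budget_ms, max_error_rate):
    """Return the name of the first phase that breaches either budget, else None."""
    for name, summary in phases:
        if summary["p99_ms"] > p99_budget_ms or summary["error_rate"] > max_error_rate:
            return name
    return None
```

Feeding it an ordered list of `(phase_name, summary)` pairs from a ramp test gives a first, coarse answer to where saturation begins; correlating that phase with infrastructure telemetry is the step that explains why.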

Penetration Testing Suite

The pentest module runs controlled, authenticated attacks against web surfaces, APIs, and system configurations. Scope controls, safe exploitation bounds, and full audit trails keep testing lawful and repeatable. What differentiates it is coupling findings to developer workflows: issues open directly in ticketing systems, evidence packs accompany each item, and verification tests re-run after fixes merge via CI/CD.

By simulating modern kill chains—token misuse, deserialization flaws, broken object-level authorization—the suite avoids checklist theater. It surfaces exploitability with context: affected assets, business impact, and the conditions under which the issue becomes practical. That framing turns “critical” from a label into an argument.

Leak Check and External Exposure

Leak Check scans public and underground sources for stolen credentials and sensitive artifacts, then applies validation logic—format checks, domain affinity, and limited active probes—to reduce noise. Signals feed risk scores that prioritize human attention: widely reused admin passwords escalate; stale test accounts de-escalate.
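
The scoring idea can be sketched in a few lines of Python. Everything here is hypothetical: the `LeakedCredential` fields, the weights, and the 180-day staleness cutoff are illustrative assumptions rather than Leak Check's actual logic, and the active-probe step is deliberately left out because it requires explicit authorization.

```python
import re
from dataclasses import dataclass

# Deliberately simple email shape check; real validation may be stricter.
EMAIL_RE = re.compile(r"^[A-Za-z0-9._%+-]+@([A-Za-z0-9-]+\.)+[A-Za-z]{2,}$")


@dataclass
class LeakedCredential:
    username: str
    is_admin: bool
    reuse_count: int            # distinct dumps containing the same password
    days_since_last_login: int


def passes_format_check(cred):
    return bool(EMAIL_RE.match(cred.username))


def has_domain_affinity(cred, monitored_domains):
    """True when the username's domain is a monitored domain or one of its subdomains."""
    domain = cred.username.rsplit("@", 1)[-1].lower()
    return any(domain == d or domain.endswith("." + d) for d in monitored_domains)


def risk_score(cred, monitored_domains):
    """Score from 0 to 100; the weights are illustrative assumptions."""
    if not passes_format_check(cred) or not has_domain_affinity(cred, monitored_domains):
        return 0
    score = 40
    if cred.is_admin:
        score += 30                           # admin accounts escalate
    score += min(cred.reuse_count, 3) * 10    # wide reuse escalates, capped
    if cred.days_since_last_login > 180:
        score -= 30                           # stale accounts de-escalate
    return max(0, min(score, 100))
```

Under these assumed weights, a widely reused admin password scores at the top of the range while a stale test account lands near the bottom, which matches the prioritization described above.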

The unique twist is how these external signals trigger internal action. Discovered leaks can launch targeted pentests against implicated apps or kick off production-safe load bursts to assess blast radius if a token were abused at scale. That linkage converts threat intelligence into system validation, not just alerts.
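
One way to picture that linkage is a small planning function that turns a validated leak signal into follow-up tests. This is a sketch under stated assumptions: `LeakSignal`, the action names, and both thresholds are invented for illustration and do not describe Overload's playbook format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeakSignal:
    asset_id: str      # service implicated by the leak
    kind: str          # "password" or "api_token" in this sketch
    risk_score: int    # 0 to 100


def plan_actions(signal, pentest_threshold=50, load_threshold=80):
    """Map a leak signal to a list of (action, asset_id) pairs; thresholds are illustrative."""
    actions = []
    if signal.risk_score >= pentest_threshold:
        actions.append(("targeted_pentest", signal.asset_id))
    # Only leaked tokens warrant a blast-radius load burst in this sketch.
    if signal.kind == "api_token" and signal.risk_score >= load_threshold:
        actions.append(("load_burst", signal.asset_id))
    return actions
```

Keeping the mapping as plain data in, plain data out makes it easy to review and audit before any test is actually launched.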

Orchestration, Data, and Integration

A central orchestration layer sequences tests, correlates results, and runs response playbooks. Normalized schemas allow cross-domain dashboards: see where DDoS tolerances, auth misconfigurations, and leaked credentials overlap on the same services. An API-first design, RBAC, and compliance logging support SIEM, SOAR, ITSM, and cloud integrations, so evidence flows to the places where auditors and responders already work.

This architecture matters more than any single feature. In competing tools, teams spend effort stitching CSVs and reconciling IDs. Overload’s shared identifiers across test artifacts, assets, and findings make trend analysis feasible—resilience becomes a metric with baselines, deltas, and service ownership.
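
The value of a shared identifier is easiest to see in code. The Python sketch below assumes every record, whatever its source, carries an `asset_id` key; that key name and the record shapes are hypothetical stand-ins, not Overload's schema.

```python
from collections import defaultdict


def correlate_by_asset(load_results, findings, leaks):
    """Group records from three sources under the asset_id they share."""
    view = defaultdict(lambda: {"load": [], "findings": [], "leaks": []})
    sources = (("load", load_results), ("findings", findings), ("leaks", leaks))
    for source, records in sources:
        for record in records:
            view[record["asset_id"]][source].append(record)
    return dict(view)


def overlapping_assets(view):
    """Assets present in all three sources, sorted for stable output."""
    return sorted(asset for asset, v in view.items() if all(v.values()))
```

With a common key, the overlap query is a one-liner; without one, the same question turns into the CSV stitching and ID reconciliation described above.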

Performance, Operations, and Differentiation

On performance, Overload’s realism stands out. Traffic models emulate burstiness and cache-warmup effects that often hide until go-live. Operationally, embedded guardrails—rate governors, kill switches, maintenance windows—allow safe testing on shared platforms. Competitors offer either load or security depth; few wire leak intelligence into test orchestration with this degree of coupling.
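
A rate governor with a kill switch can be sketched as a token bucket. The class below is an illustrative assumption, not Overload's implementation: the name `RateGovernor`, its parameters, and the injectable `clock` (included so the behavior can be tested deterministically) are all hypothetical, and maintenance-window checks are omitted for brevity.

```python
import time


class RateGovernor:
    """Token-bucket limiter with a kill switch for test traffic."""

    def __init__(self, max_rps, burst, clock=time.monotonic):
        self.max_rps = max_rps
        self.capacity = burst
        self.tokens = float(burst)
        self.clock = clock
        self.last = clock()
        self.killed = False

    def kill(self):
        """Stop all further traffic until a new governor is created."""
        self.killed = True

    def allow(self):
        """Return True if one more request may be sent right now."""
        if self.killed:
            return False
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond the burst capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.max_rps)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A load generator would call `allow()` before each request and drop or delay the request when it returns `False`; an operator or an automated health check calls `kill()` to halt the run immediately.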

However, trade-offs exist. Realistic generation at scale demands careful network egress management and may require dedicated test regions to avoid noisy interference with third parties. Leak validation balances speed with ethics; overzealous probing risks policy breaches, while overly cautious checks increase false negatives. Success hinges on integration quality: if asset inventories or identity sources are incomplete, correlation weakens.

Use Cases and Measured Impact

E-commerce teams can rehearse peak-season surges, validate bot mitigations, and confirm failover while monitoring checkout latency under stress. SaaS providers gain API abuse testing tied to service mesh routing, closing gaps between gateway policies and app logic. Financial firms benefit from Leak Check’s credential rotation workflow and evidence packs that map directly to regulatory reporting. Media platforms baseline CDN behavior and run “chaos-lite” failover drills at the edge. Public sector programs conduct strictly authorized scenarios within hard scopes, with continuous exposure watch for sensitive datasets.

What these have in common is a move from “isolated fix” to “closed loop.” Findings spawn work, work is verified by test, verification updates baselines, and baselines inform risk posture. That loop, not a dashboard screenshot, is the product.

Verdict and Next Steps

This review found that Overload delivered a credible, integrated take on resilience by binding load realism, ethical pentesting, and leak-driven signals into one workflow. The platform excelled where correlation mattered—tying external exposure to internal weakness and operational headroom. It also revealed dependencies: clean asset inventories, disciplined RBAC, and thoughtful test windows were prerequisites for consistent value.

For teams evaluating options, the pragmatic path is clear: start with a narrow, high-value service; enable Leak Check on identities tied to that surface; run scoped pentests to harden logic; then schedule progressive load waves to verify resilience under adversarial patterns. As coverage expands to microservices, service meshes, and edge compute, Overload’s orchestration and shared data layer become the leverage point. The net verdict favors organizations ready to operationalize evidence, not just collect it, while acknowledging that integration depth and data quality will ultimately decide outcomes.