Vijay Raina is a seasoned expert in enterprise SaaS and software architecture, with a deep focus on how modern businesses leverage automation to scale operations. In this discussion, he explores the transformative shift of productivity platforms into sophisticated orchestration layers that house both human and AI collaboration. We dive into the technical mechanics of secure cloud sandboxes, the integration of emerging standards like the Model Context Protocol, and the strategic move from basic chatbots to autonomous agents that handle complex, multi-step workflows across various enterprise data sources.

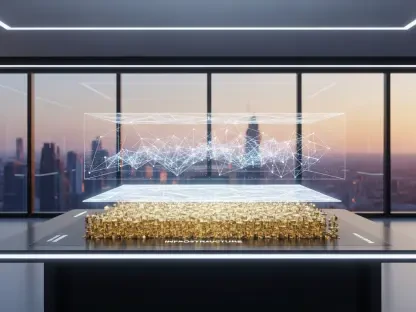

Collaborative tools are evolving into orchestration layers that coordinate work across multiple software platforms. How does this shift from a simple application to “core infrastructure” change how teams manage daily operations, and what technical hurdles do organizations face when consolidating disparate data sources into one hub?

The transition from a simple note-taking application to an orchestration layer represents a fundamental change in how we perceive productivity software. When a platform becomes core infrastructure, it moves from being a passive repository of information to an active participant in daily operations that can pull in data from any database. In the past, teams struggled with fragmented data silos, where status updates and project milestones lived in separate, disconnected apps. Now, with users having already built more than one million agents, manual synchronization is giving way to automated workflows. The primary technical challenge remains the secure consolidation of these disparate sources, as organizations must ensure that bringing external data into a central hub doesn’t create new vulnerabilities or data integrity issues.

The ability to run custom code in a secure cloud sandbox allows teams to trigger actions via webhooks without external hosting. What specific logic-heavy workflows are now possible with this setup, and how should developers approach the security implications of deploying code directly within a shared productivity workspace?

The introduction of Workers, which provides a cloud-based environment for running custom code, is a game-changer for developers who previously had to manage their own external infrastructure. This setup allows for sophisticated, logic-heavy workflows such as triggering specific actions via webhooks when a database entry is updated or a project stage changes. Developers can now write custom logic to handle complex data transformations or unique business rules directly within a secure sandbox that isolates the code from the rest of the system. This isolation is crucial for security, as it ensures that custom scripts can’t inadvertently interfere with the broader workspace or compromise sensitive information. By using the same credit system as custom agents—and even making it free through August for experimentation—platforms are encouraging a “code-first” approach to productivity that was previously too cumbersome for many teams to maintain.
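To make the webhook-triggered workflow concrete, here is a minimal sketch in Python of the kind of logic such a sandboxed function might hold. Everything specific in it is an assumption for illustration: the payload shape, the event name `record.updated`, the `stage` field, the action names, and the HMAC signing scheme are hypothetical and do not describe the platform's actual API.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; a real platform would define its own signing scheme.
SECRET = b"demo-secret"


def sign(body: bytes, secret: bytes = SECRET) -> str:
    """Return a hex HMAC-SHA256 signature for a raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str) -> dict:
    """Verify the signature, then apply a simple business rule to the event."""
    # Reject requests whose signature does not match (constant-time comparison).
    if not hmac.compare_digest(sign(body), signature):
        return {"status": 401, "action": None}

    event = json.loads(body)
    record = event.get("record", {})

    # Assumed payload shape: {"type": "...", "record": {"stage": "..."}}
    if event.get("type") == "record.updated" and record.get("stage") == "Done":
        return {"status": 200, "action": "notify_stakeholders"}
    return {"status": 200, "action": "ignore"}


# Example: a database entry moves to the "Done" stage.
payload = json.dumps({"type": "record.updated", "record": {"stage": "Done"}}).encode()
result = handle_webhook(payload, sign(payload))
```

Checking the signature before parsing the body is one common way to address the security question raised above: even inside an isolated sandbox, the code should only act on requests it can attribute to a trusted sender.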

Keeping data current across platforms like Salesforce, Zendesk, and internal Postgres databases often requires complex third-party scripts. How does native database syncing simplify this maintenance, and what are the primary benefits of using a unified workspace as a “canvas” for real-time data visualization and agentic work?

Native database syncing eliminates the need for brittle, third-party automation scripts that often break or require constant updates to keep up with API changes. By pulling in real-time data from heavyweights like Salesforce, Zendesk, and Postgres, a workspace becomes a “canvas” where agents and human team members can collaborate on live information. This unified environment allows for more accurate agentic work, as the AI teammates are making decisions based on the most current data available rather than stale exports. The primary benefit is a reduction in operational overhead; instead of managing a dozen different integrations, developers can focus on building the actual logic that drives the business forward. It effectively turns the workspace into a programmable engine where the data is always live and the workflows are always synchronized.

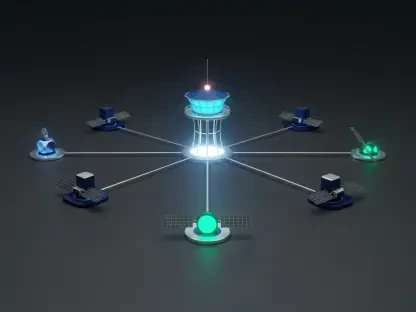

Emerging standards like the Model Context Protocol (MCP) allow internal workspaces to communicate with external agents like Claude Code or Cursor. How do these integrations change the way developers assign and track tasks, and what is the process for ensuring external AI tools interact correctly with internal data?

The adoption of the Model Context Protocol (MCP) marks a significant step toward an open ecosystem where different AI tools can speak the same language. This allows developers to chat directly with external agents like Claude Code, Cursor, Codex, or Decagon and assign them tasks as if they were internal team members. You can now track the progress of these external agents within your own workspace, which provides a level of visibility that was previously impossible when using standalone AI coding tools. To ensure these tools interact correctly with internal data, developers use specialized interfaces such as a command-line interface (CLI), which is available on higher-tier business and enterprise plans. This gives technical teams the granular control they need to manage how these external “brains” access and modify internal information while maintaining strict oversight of the work being performed.
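For readers unfamiliar with what “speaking the same language” looks like on the wire, MCP messages are JSON-RPC 2.0 requests, with methods such as `tools/list` to discover tools and `tools/call` to invoke one. The sketch below only builds those messages; the tool name `update_task_status` and its arguments are hypothetical, and no transport or real server is involved.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_tools = mcp_request("tools/list")

# Invoke a tool by name. The tool and its arguments are assumptions for illustration.
call_tool = mcp_request(
    "tools/call",
    {
        "name": "update_task_status",
        "arguments": {"task_id": "T-123", "status": "in_progress"},
    },
)
```

Because every tool invocation is an explicit, named request, a workspace can log, restrict, or review each call, which is what makes the oversight described above practical.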

Modern businesses are moving past basic chatbots toward agentic tools that perform multi-step workflows. What criteria should a company use to decide between building a custom internal agent via an API versus using a pre-configured partner tool, and what metrics best define the success of these automated agents?

Deciding whether to build a custom internal agent via an External Agent API or use a pre-configured partner tool like Decagon depends largely on the specificity of the company’s internal logic and the sensitivity of their data. If a team has highly proprietary workflows that require deep integration with unique internal systems, building a custom agent is usually the better path for long-term flexibility. However, partner tools are excellent for standard operations like customer support or general project management where the setup speed is a priority. Success should be measured by the reduction in repetitive tasks—such as how many status updates are compiled automatically or how many FAQs are handled without human intervention. Ultimately, the goal is to see a measurable increase in “multi-step” completions, where an agent takes a project from an initial trigger all the way to a finished output across multiple tools.
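One way to pin down the “multi-step completion” metric is sketched below in Python. The `AgentRun` record and its fields are hypothetical, and the definition used here (a run counts only if every planned step finished without human intervention) is one reasonable reading of the answer above, not an established standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AgentRun:
    """Hypothetical log record for one agent run."""
    steps_planned: int
    steps_completed: int
    needed_human: bool


def multi_step_completion_rate(runs: List[AgentRun]) -> float:
    """Share of multi-step runs that finished every step with no human help."""
    multi_step = [r for r in runs if r.steps_planned > 1]
    if not multi_step:
        return 0.0
    completed = sum(
        1
        for r in multi_step
        if r.steps_completed == r.steps_planned and not r.needed_human
    )
    return completed / len(multi_step)


runs = [
    AgentRun(steps_planned=3, steps_completed=3, needed_human=False),  # counts
    AgentRun(steps_planned=4, steps_completed=2, needed_human=False),  # stalled
    AgentRun(steps_planned=1, steps_completed=1, needed_human=False),  # single-step, excluded
    AgentRun(steps_planned=2, steps_completed=2, needed_human=True),   # needed a person
]
rate = multi_step_completion_rate(runs)
```

Tracking this rate over time, alongside simpler counts such as automatically compiled status updates, gives a trend line rather than a single snapshot.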

What is your forecast for AI agents in the workspace?

My forecast is that we are rapidly approaching a “zero-interface” era where the majority of administrative and data-management tasks happen invisibly in the background. In the next few years, the distinction between a productivity app and an operating system will blur, as agents move from being simple helpers to becoming the primary coordinators of enterprise data. We will see a shift where “Any data, any tool, any agent” becomes the standard architecture for every modern business, regardless of size. Organizations that embrace this programmable infrastructure today will have a massive competitive advantage, as they will be able to scale their operations without a linear increase in human headcount or administrative complexity.