The golden age of software, when gross margins hovered near ninety percent and scale felt nearly infinite, is facing a reckoning as the hidden costs of intelligence begin to surface. For decades, cloud software followed a predictable growth path: once the code was written, the marginal cost of serving an additional customer was almost zero. Today, the landscape has shifted toward a compute-heavy paradigm in which every query, generation, and automated action carries its own price tag. This transformation is not merely a technical update but a fundamental rewiring of the unit economics that once made Software-as-a-Service one of the most profitable sectors in the global economy.

The current state of the industry reveals a stark divide between legacy providers struggling to adapt and nimble players built on neural architectures. Competitive advantage no longer depends only on the efficiency of the code itself; it is increasingly tied to the efficiency of the underlying chips and the models they run. While established players still dominate market share, their financial structures are being tested by the sheer energy and processing power required to sustain modern digital services. Regulation is also tightening, particularly regarding how data is used to fuel these expensive models, creating a high-stakes environment where compliance and cost management are now inseparable.

The Death of Fixed-Cost Software and the Rise of Generative Pricing

Strategic Shifts in Modern Software Delivery

Modern software delivery is moving away from the “build once, run everywhere” mantra toward a model of continuous, high-cost inference. This shift is driven by a change in consumer behavior; users no longer want static tools but rather dynamic partners that can reason and execute tasks autonomously. This emerging expectation has forced software providers to integrate massive computational layers into their products, turning what was once a fixed-cost asset into a variable-cost liability. Consequently, the industry is seeing a move toward hybrid delivery systems where basic tasks remain on traditional servers while complex reasoning is offloaded to expensive specialized clusters.

This evolution presents a massive opportunity for those who can optimize the delivery of these services, yet it simultaneously threatens the dominance of those who cannot move beyond the flat-fee subscription. We are witnessing the birth of generative pricing, where the price of the software is directly tied to the complexity of the intelligence it provides. This is not just about charging more; it is about surviving in a world where the cost of goods sold is no longer a rounding error. Competitive pressure is now concentrated on efficiency at the inference layer, pushing developers to seek out new ways to compress models without sacrificing the quality of the output.

Quantifying the Margin Compression and Financial Performance

The financial reality of this new era is reflected in the visible erosion of gross margins across the sector. Recent performance indicators show that software companies heavily invested in autonomous features are seeing their historic margins contract by as much as twenty percent. As the “token tax” (the compute charge incurred by every model call) accumulates with each user interaction, the profit per user is being squeezed between rising compute costs and the competitive pressure to keep subscription prices stable. This compression is a structural shift, not a temporary dip, as the infrastructure required to run these sophisticated systems remains priced at a premium.
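To make the squeeze concrete, the following minimal sketch computes gross margin for a single flat-fee subscriber as token usage grows. Every figure in it (the $30 monthly price, the $3 of traditional hosting cost, and the $10 per million tokens) is a hypothetical assumption chosen for illustration, not data from this report.

```python
def gross_margin(price, fixed_cogs, tokens_used, cost_per_million_tokens):
    """Return gross margin (as a fraction) for one subscriber in one billing period."""
    # Variable inference cost scales linearly with tokens consumed.
    inference_cost = tokens_used / 1_000_000 * cost_per_million_tokens
    cogs = fixed_cogs + inference_cost
    return (price - cogs) / price


# Hypothetical inputs: flat $30/month plan, $3/month of traditional hosting,
# and $10 per million tokens of inference.
PRICE = 30.0
FIXED_COGS = 3.0
COST_PER_MILLION = 10.0

for tokens in (0, 300_000, 600_000, 1_200_000):
    margin = gross_margin(PRICE, FIXED_COGS, tokens, COST_PER_MILLION)
    print(f"{tokens:>9,} tokens -> gross margin {margin:.0%}")
```

Under these assumed numbers, a subscriber who uses no tokens yields a 90% margin, while one who consumes 600,000 tokens in the month yields 70%, even though the subscription price never changed.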

Looking forward, growth projections suggest that while revenue may continue to climb, the path to profitability will be significantly steeper. Forecasts for the period from 2026 to 2028 indicate that the most successful firms will be those that move their customer bases to consumption-based models. Market data points toward a future where “credits” or “compute units” become the primary currency of software procurement. Companies that fail to make this transition risk being trapped in a legacy financial model that cannot support the weight of the very intelligence they are trying to sell.
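One way to picture a credit-based model is as a prepaid balance that each action draws down. The sketch below is illustrative only: the `CreditAccount` class, the action names, and the credit prices are all hypothetical and do not describe any particular vendor's billing system.

```python
from dataclasses import dataclass, field


class InsufficientCredits(Exception):
    """Raised when an account cannot cover the cost of a request."""


@dataclass
class CreditAccount:
    balance: int  # prepaid credits remaining
    usage_log: list = field(default_factory=list)

    def charge(self, action: str, credits: int) -> int:
        """Deduct credits for one action and return the remaining balance."""
        if credits < 0:
            raise ValueError("credits must be non-negative")
        if credits > self.balance:
            raise InsufficientCredits(
                f"{action} needs {credits} credits, only {self.balance} left"
            )
        self.balance -= credits
        self.usage_log.append((action, credits))
        return self.balance


# Hypothetical price list: credits charged per action type.
PRICE_LIST = {"autocomplete": 1, "summarize_document": 5, "run_agent_workflow": 40}

account = CreditAccount(balance=100)
for action in ("autocomplete", "summarize_document", "run_agent_workflow"):
    remaining = account.charge(action, PRICE_LIST[action])
    print(f"{action}: {PRICE_LIST[action]} credits, {remaining} left")
```

The design choice worth noting is that heavier actions cost more credits, so the provider's revenue rises with the compute each customer actually consumes instead of staying flat.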

The High Cost of Intelligence: Technical and Operational Obstacles

Overcoming the technical debt associated with high-cost compute requires a total reimagining of the software stack. One of the primary obstacles is the inherent latency and expense of calling large-scale models for every minor user action. To combat this, developers are increasingly turning to “slimmer” models and edge computing to handle routine tasks, reserving high-powered compute for the most demanding requests. This tiered approach to intelligence helps manage the operational burden, but it also adds a layer of complexity to the development cycle that didn’t exist in the era of simple web applications.
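The tiered approach described above can be sketched as a simple router that sends each request to the cheapest model able to handle it. This is a minimal illustration under stated assumptions: the tier names, per-request costs, and complexity thresholds are invented, and the complexity score is assumed to come from some upstream classifier that is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_request: float  # hypothetical dollars per request
    max_complexity: int      # highest complexity score this tier should handle


# Ordered cheapest first; names, costs, and thresholds are illustrative only.
TIERS = (
    ModelTier("edge-small", 0.0001, 3),
    ModelTier("hosted-medium", 0.002, 7),
    ModelTier("cluster-large", 0.03, 10),
)


def route(complexity: int) -> ModelTier:
    """Pick the cheapest tier whose ceiling covers the request's complexity."""
    for tier in TIERS:
        if complexity <= tier.max_complexity:
            return tier
    return TIERS[-1]  # anything above the scale goes to the largest model


def total_cost(complexities) -> float:
    """Sum the per-request cost of routing each request to its tier."""
    return sum(route(c).cost_per_request for c in complexities)


# Hypothetical batch of requests, each scored 1 (trivial) to 10 (hardest).
requests = [1, 2, 2, 5, 9]
tiered = total_cost(requests)
always_large = len(requests) * TIERS[-1].cost_per_request
print(f"tiered: ${tiered:.4f}  vs  always-large: ${always_large:.4f}")
```

The savings depend entirely on the mix of requests and on how reliably complexity can be scored, which is part of the added development complexity the paragraph above mentions.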

Market-driven challenges are further exacerbated by the scarcity of specialized hardware, which keeps the price of tokens high. Strategies to overcome this often involve long-term commitments to cloud providers or the development of proprietary, specialized hardware accelerators. There is also a growing talent gap, as the skills required to optimize a model for cost are vastly different from those required to build a feature for a user. The firms that manage to bridge this gap will find themselves with a significant competitive advantage in a market that is increasingly sensitive to the bottom line.

Governing the Algorithmic Economy: Data Security and AI Compliance

The regulatory landscape is becoming increasingly complex as governments seek to keep pace with the rapid deployment of autonomous systems. New laws on data residency and model transparency are forcing companies to reconsider how and where they process information. Compliance is no longer just a legal hurdle; it is a significant cost center that affects everything from data storage to the fine-tuning of models. Security measures must now account for “prompt injection” and other vulnerabilities that did not exist in the traditional software world, adding yet another layer of expense to the product lifecycle.

These standards are also impacting industry practices by requiring more rigorous auditing of the data sets used to train and run these systems. As security and privacy become top-tier priorities, the industry is seeing a shift toward decentralized processing and localized data silos. While these measures protect the user, they often increase the operational cost and decrease the efficiency of the software. Navigating this environment requires a delicate balance between pushing the boundaries of what software can do and ensuring that it remains within the evolving boundaries of international law.

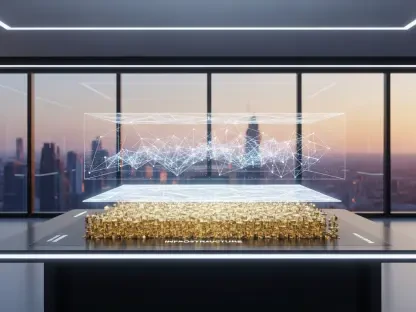

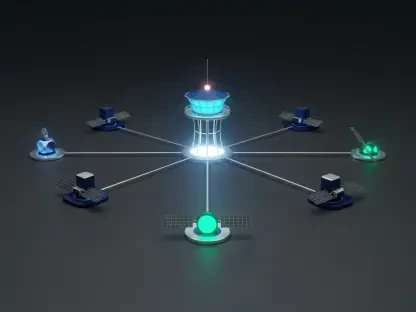

The Future Landscape: Autonomous Agents and Infrastructure Dominance

The next phase of the industry will likely be defined by the dominance of autonomous agents that can navigate complex workflows without human intervention. These agents represent a massive leap in value but also a massive leap in compute consumption. As software shifts from being a tool that humans use to an agent that acts on behalf of humans, the relationship between the user and the software will change entirely. This will likely disrupt the market by giving infrastructure providers (those who own the chips and the data centers) more leverage over the application layer.

Innovation in this space will be driven by the need to make these agents more efficient and less reliant on massive centralized clusters. Potential growth areas include specialized AI-native hardware for the home and office, as well as new protocols for agent-to-agent communication. Global economic conditions will play a role, as the high cost of energy and raw materials for chip manufacturing could either accelerate or slow down the adoption of these technologies. The infrastructure layer is becoming the new “operating system,” and those who control it will dictate the pace of innovation for everyone else.

Redefining Value in the Post-Subscription Era

The findings of this report suggest that the era of nearly infinite margins has been replaced by a more disciplined financial reality. The industry is moving away from the simple metrics of the past as executives and investors begin to prioritize efficiency over raw user growth. The value of software is no longer found in the code itself but in the specific business outcomes it can generate through intelligent automation. The firms best positioned to succeed are those that recognize this shift early and build their financial models around the reality of the token tax rather than ignoring it.

Strategic investments in proprietary model optimization and tiered compute architectures are becoming the new standard for maintaining a healthy bottom line. Organizations that treat compute as a precious resource, rather than an unlimited utility, can sustain their innovation cycles without sacrificing financial stability. The shift toward outcome-based billing offers a path forward, allowing providers to align their success directly with the success of their customers. This period of transition may ultimately pave the way for a more mature, resilient software economy that values precision and performance over the sheer scale of the previous decade.