The investment landscape for software-as-a-service has undergone a seismic shift, moving from a period of “fortress” valuations to what many are calling a “SaaSpocalypse.” As artificial intelligence moves from a supportive tool to an autonomous agent capable of writing its own code, investors are questioning whether the high margins and predictable revenues of the past decade can survive. To navigate this volatility, we are joined by Vijay Raina, a seasoned specialist in enterprise technology and software architecture. With his deep background in identifying the structural integrity of digital platforms, Vijay provides a unique perspective on why the market’s current panic might be overlooking the enduring power of “systems of record” and the massive data moats held by established players. In this discussion, we explore the nuances of the AI revolution, from the rise of natural language programming to the strategic necessity of usage-based pricing models in an era of shrinking headcounts.

AI tools now enable “vibe coding,” allowing users to describe software in plain English to generate functional programs. How does this zero-barrier entry impact traditional software margins, and what specific steps must a company take to ensure its product remains a proprietary asset rather than a replaceable commodity?

The arrival of “vibe coding” via tools like Anthropic’s Cowork has genuinely rattled the market because it challenges the long-held belief that the difficulty of writing code was a primary barrier to entry. When an entrepreneur can simply speak a project-management tool into existence, the high profit margins of traditional SaaS firms suddenly look like a massive target for disruption. We are seeing a shift where white-collar processes, like auditing or processing invoices, are becoming commoditized because the software that supports them is now vulnerable to cheaper, faster AI alternatives. To remain proprietary, a company must move beyond being a simple tool and become a “system of record”: the single, trusted source of truth for a business’s most sensitive data. This means moving away from just offering features and focusing instead on governance, audit trails, and legal protections that a “vibe-coded” prototype simply cannot replicate. For a company to survive this transition, its software must be built into the very rules of the business, so that switching to an unverified AI tool feels catastrophic rather than cost-effective.

Large firms have recently implemented massive staff reductions as AI tools increase individual worker productivity. Given that many software providers charge on a per-seat basis, how can these companies successfully transition to usage-based or outcome-based pricing models while maintaining their current revenue streams?

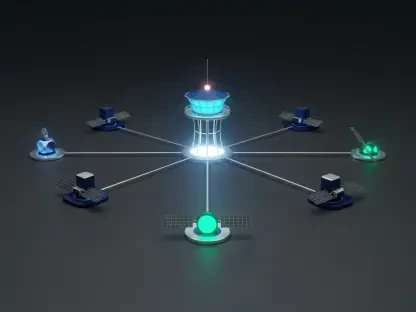

The trend toward radical efficiency is already visible, with firms like Block cutting their staff from 10,000 to fewer than 6,000 employees because intelligence tools have fundamentally changed what it means to run a company. This creates a direct threat to SaaS providers like Salesforce or Adobe that rely on per-user licensing; if a customer becomes twice as productive with half the people, the software provider potentially loses 50% of its revenue from that customer overnight. To counter this, winners in the space must pivot to pricing models that capture the value of the outcome, such as the number of invoices processed or the success of a marketing campaign, rather than the number of humans logged in. We are seeing this evolution with products like Agentforce, where the software acts as an autonomous agent rather than a manual tool for an individual. The transition is difficult and requires deep integration into the enterprise workflow, but by becoming “mission-critical” infrastructure that drives specific business results, firms can protect their income even as the number of traditional “seats” in an office declines.
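To make the arithmetic behind that answer concrete, here is a minimal sketch comparing per-seat and outcome-based billing for a single customer that halves its headcount while doubling output per employee. Every number in it (seat counts, invoices per seat, both prices) and every name is a hypothetical chosen for illustration, not a figure from any vendor mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    seats: int              # employees with a login (hypothetical)
    invoices_per_seat: int  # monthly invoices handled per employee (hypothetical)


def per_seat_revenue(customer: Customer, price_per_seat: float) -> float:
    """Monthly revenue when the vendor bills per logged-in user."""
    return customer.seats * price_per_seat


def outcome_revenue(customer: Customer, price_per_invoice: float) -> float:
    """Monthly revenue when the vendor bills per invoice processed."""
    return customer.seats * customer.invoices_per_seat * price_per_invoice


# Assumed scenario: half the people, each twice as productive,
# so the total workload is unchanged.
before = Customer(seats=1000, invoices_per_seat=200)
after = Customer(seats=500, invoices_per_seat=400)

PRICE_PER_SEAT = 100.0    # assumed monthly price per user
PRICE_PER_INVOICE = 0.50  # assumed; set so both models bill the same at the start

seat_before = per_seat_revenue(before, PRICE_PER_SEAT)
seat_after = per_seat_revenue(after, PRICE_PER_SEAT)
outcome_before = outcome_revenue(before, PRICE_PER_INVOICE)
outcome_after = outcome_revenue(after, PRICE_PER_INVOICE)

seat_change = (seat_after - seat_before) / seat_before
outcome_change = (outcome_after - outcome_before) / outcome_before

print(f"Per-seat revenue change:      {seat_change:+.0%}")
print(f"Outcome-based revenue change: {outcome_change:+.0%}")
```

Under these assumptions, per-seat revenue tracks headcount and falls by half, while outcome-based revenue tracks the volume of work and stays flat. In practice the result depends on how the per-unit price is negotiated and on whether workload really holds steady as staff are cut.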

There is a vital distinction between simple software tools and “systems of record” like accounting or payroll infrastructure that hold sensitive data. What are the catastrophic risks of replacing established infrastructure with AI-generated alternatives, and why is a third-party guarantee often more valuable than cost savings?

For a business using a platform like Sage, the software cost is often a tiny fraction of its total expenses, but its entire internal architecture is built around it. If a company attempts to replace this with a “vibe-coded” alternative and that AI-generated tool makes a single error in tax compliance or payroll, the consequences are not just financial; they are potentially legal and existential. Software testing has historically been a much longer and more critical process than the actual creation of the code, and AI-built prototypes often fall into the “maintenance trap” where they cannot easily be updated to meet changing laws or security threats. Most serious businesses would rather pay for a third-party guarantee, knowing there is a human to call and a verified system of governance when things go wrong, than save a few dollars on an unmanaged tool. This is why “systems of record” are so resilient; they provide a clean, verified environment for data that prevents the chaos of unmanaged data silos, making them essential infrastructure that most businesses simply cannot live without.

Companies like Experian and Relx rely on human-verified, private datasets to provide legal and financial accuracy. In an era where AI-generated misinformation is rising, how can firms leverage these “data moats” to protect their market share, and what metrics demonstrate their superiority over general AI models?

In a world increasingly flooded with AI-generated misinformation and fake identities, the value of verified, private data has never been higher. Experian, for instance, manages a staggering 1.3 billion data updates every month, providing a verified credit history that acts as a vital filter for the global banking system. This massive dataset is something that general AI models cannot train on because it sits behind private firewalls and requires expert verification. We can see the strength of this “data moat” in Experian’s performance, with organic revenue growth accelerating to 8% in late 2025 as banks sought out accuracy in their lending decisions. Similarly, firms like Relx and Wolters Kluwer use experts to check their medical and tax insights, ensuring their data can be defended in court or used in life-and-death hospital settings. The superiority of these models is demonstrated by their high customer retention rates and the fact that their tools, like Wolters Kluwer’s UpToDate platform, are directly linked to improved patient outcomes, justifying a price premium that general AI tools simply cannot command.

Market volatility has led some consolidators to buy up niche software businesses that manage specialized tasks like hospital billing or transit routes. What specific criteria should be used to identify “mission-critical” software trading at a discount, and how does owning these specialized workflows provide a long-term advantage?

The key to identifying a “mission-critical” bargain is looking for software that manages specialized workflows where the cost to the customer is low but the cost of failure is very high. Constellation Software is the master of this strategy, acquiring hundreds of niche companies that handle vital but “boring” tasks like bus routing or hospital billing systems. These businesses are protected because the chance of a new AI startup successfully replicating thousands of these highly specific, legally compliant workflows is slim. When the market panics and treats these specialized utilities the same as a generic sales tracker, it creates a massive opportunity for consolidators to buy them at a discount. Owning these workflows provides a long-term advantage because they are deeply embedded in the operational backbone of an industry, and once the “plumbing” is installed, customers are notoriously reluctant to move years of data to a different provider.

The shift toward autonomous AI agents suggests that software is evolving from a manual tool into a self-managing entity. How does this change the internal cost structures for established software firms, and what are the practical implications for their long-term research and development budgets?

While AI is certainly a threat to some business models, it is also a powerful internal tool that is significantly pushing down the cost of maintaining and developing software. Large firms like Oracle and Constellation Software can now maintain their massive libraries of code with much higher efficiency, potentially leading to rising profit margins even if their top-line growth slows down. This shift allows R&D budgets to be reallocated from manual maintenance and basic coding tasks toward high-end product development and the creation of physical infrastructure, such as the billions Oracle is pouring into data centers. We are also seeing companies like Intuit use AI to move into “live services,” where the software helps find extra tax deductions that users might have missed, effectively paying for itself. This evolution means that while the “manual” part of software creation is getting cheaper, the investment in governance, legal accuracy, and physical digital plumbing is becoming the new focus of the industry’s capital expenditure.

What is your forecast for the SaaS industry?

I believe we are entering a period of extreme divergence where the “utility” players will thrive while “simple tools” continue to struggle. My forecast is that firms serving as the primary system of record, such as Sage, Intuit, and SAP, will see their margins expand as they integrate AI to save their customers significant time, like the five to ten hours of weekly administrative work Sage is already saving its users. However, we will likely see a continued shakeout for companies that cannot move past the per-seat licensing model, as headcounts at major corporations continue to shrink. In the UK, Experian and Sage look particularly robust because they are built into the very regulatory and financial rules that businesses must follow to operate. Ultimately, the “SaaSpocalypse” is not the end of the industry, but rather a brutal sorting process that will leave us with a smaller group of much more powerful, deeply embedded infrastructure giants that control the essential data and workflows of the global economy.