Vijay Raina is a seasoned authority in the realm of enterprise SaaS and software architecture, bringing a wealth of experience in how modern organizations integrate complex digital tools. As a specialist in software design, he has watched the rapid evolution of developer environments from simple text editors to autonomous agentic ecosystems. In this conversation, we explore the intensifying competition between industry leaders like OpenAI and Anthropic, specifically focusing on how the latest updates to Codex are redefining the boundaries of background automation and developer productivity.

Background agents can now click, type, and open desktop apps on a Mac while a person works on other tasks. How does this parallel workflow change daily developer routines, and what specific safeguards ensure these background agents don’t disrupt critical system settings?

The shift to parallel workflows means a developer is no longer tethered to a single sequential timeline; while you are deep in architectural design, an agent can be opening desktop apps and iterating on front-end changes in the background. The productivity gain comes from handing off auxiliary tasks, such as testing apps or working in software that lacks a dedicated API, to an agent that handles them autonomously. To ensure these agents don’t wreak havoc, the system is designed to operate without interfering with the user’s active work in other applications, essentially creating a partitioned digital workspace. Safeguards are centered on this “non-interference” principle: the agent’s cursor and keyboard inputs are mapped specifically to the background tasks it has been assigned, which prevents it from accidentally modifying system settings or interrupting your primary screen focus.

Automated tools now include in-app browsers to command web applications on a local host. What technical hurdles arise when moving from localhost to fully commanding live web environments, and how should developers prepare their front-end architecture to be more “agent-friendly”?

Moving from the controlled environment of a localhost to a live web environment introduces variables like latency, dynamic content loading, and complex security protocols that can trip up an automated agent. When an agent commands an in-app browser, it must accurately interpret the DOM (Document Object Model) to click and type just as a human would. To make front-end architecture more “agent-friendly,” developers should focus on semantic HTML and consistent element naming, which helps agents identify buttons and fields more reliably, whether the project is a game or a web app. This transition is critical because, as the technology matures, these tools will need to navigate live production environments to perform real-world testing and deployment tasks without human hand-holding.
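
To make the “agent-friendly” point concrete, here is a minimal sketch, not a description of how Codex or any other tool actually reads a page. It assumes an agent looks for native interactive elements and stable names; the `ControlFinder` class, the sample markup, and the `data-testid` convention are all illustrative assumptions.

```python
from html.parser import HTMLParser

# Hypothetical markup: the same sign-up control written two ways.
SEMANTIC = """
<form>
  <label for="email">Email</label>
  <input id="email" name="email" type="email" data-testid="signup-email">
  <button type="submit" data-testid="signup-submit">Create account</button>
</form>
"""
DIV_SOUP = """
<div class="c1"><div class="c2 x9" onclick="go()">Create account</div></div>
"""


class ControlFinder(HTMLParser):
    """Collects native interactive elements along with a usable name."""

    INTERACTIVE = {"button", "input", "a", "select", "textarea"}

    def __init__(self):
        super().__init__()
        self.controls = []
        self._open_button = None  # button whose text we are still reading

    def handle_starttag(self, tag, attrs):
        if tag not in self.INTERACTIVE:
            return
        a = dict(attrs)
        control = {
            "tag": tag,
            "name": a.get("aria-label") or a.get("name") or "",
            "test_id": a.get("data-testid"),
        }
        self.controls.append(control)
        if tag == "button":
            self._open_button = control

    def handle_data(self, data):
        # Fall back to the visible button text when no explicit name is set.
        if self._open_button is not None and not self._open_button["name"]:
            self._open_button["name"] = data.strip()

    def handle_endtag(self, tag):
        if tag == "button":
            self._open_button = None


def find_controls(html):
    finder = ControlFinder()
    finder.feed(html)
    finder.close()
    return finder.controls


semantic_controls = find_controls(SEMANTIC)
div_controls = find_controls(DIV_SOUP)
```

With the semantic markup, the sketch finds two named controls. With the nested `div` version it finds none, which leaves an agent guessing from class names and click handlers.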

Coding assistants are incorporating memory features to recall past sessions and specific user habits. How does this long-term context impact the accuracy of complex code suggestions, and what are the security trade-offs when enterprise data is stored to build this personalized context?

The introduction of “memory” is a game-changer for accuracy because the agent can now recall the specific architectural patterns and “shorthand” a developer used in previous sessions. Instead of starting every prompt from scratch, the assistant builds a personalized context that understands how you specifically solve problems, leading to code suggestions that feel bespoke rather than generic. However, the security trade-offs are significant; storing long-term session data means that sensitive enterprise logic and proprietary habits are now part of a stored profile. Companies must weigh the benefit of this 24/7 “coding buddy” against the risk of having their internal development “DNA” archived in a cloud-based memory bank.

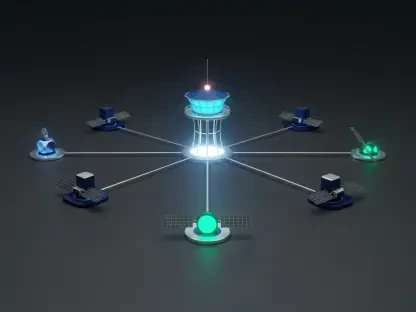

With over a hundred integrations for tools like Slack and GitLab, agents can now organize daily schedules and triage issues. What is the step-by-step process for auditing these automated workflows, and how do you prevent errors when AI manages clerical administrative tasks?

Auditing these workflows begins with a clear mapping of the 111 currently available plug-in integrations, such as CodeRabbit or GitLab Issues, to confirm the agent has the correct permissions for each. The next step is a “human-in-the-loop” verification: the agent generates a to-do list or triages issues, but the user must give a final confirmation before the agent acts on external calendars or Slack channels. To prevent clerical errors, developers should set strict boundaries on the agent’s autonomy. For example, allow it to draft a daily schedule based on Google Calendar, but do not allow it to delete meetings without a secondary prompt. This creates a safety net where the AI handles the heavy lifting of data gathering while the human retains the final executive decision-making power.
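
One way to picture that audit flow is as a small permission gate. The sketch below is an illustration under stated assumptions, not any vendor's actual API: the `Action` type, the `POLICY` table, and the integration and verb names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    integration: str  # hypothetical identifier, e.g. "google_calendar"
    verb: str         # e.g. "draft", "delete", "post"
    target: str


# Hypothetical policy: anything not listed is denied by default.
POLICY = {
    ("google_calendar", "read"): "allow",
    ("google_calendar", "draft"): "allow",
    ("google_calendar", "delete"): "confirm",  # needs a secondary prompt
    ("slack", "post"): "confirm",
}


def run(actions, confirm, audit_log):
    """Apply the policy to each action and record every decision."""
    executed = []
    for action in actions:
        rule = POLICY.get((action.integration, action.verb), "deny")
        if rule == "allow":
            approved = True
        elif rule == "confirm":
            approved = bool(confirm(action))  # human-in-the-loop step
        else:
            approved = False
        audit_log.append((action, rule, approved))
        if approved:
            executed.append(action)
    return executed


proposed = [
    Action("google_calendar", "draft", "tomorrow's schedule"),
    Action("google_calendar", "delete", "weekly sync"),
    Action("gitlab_issues", "close", "stale issue"),
]
log = []
# Here the human declines every confirmation request.
done = run(proposed, confirm=lambda action: False, audit_log=log)
```

In this run only the draft goes through. The deletion is held back by the declined confirmation, the unlisted GitLab action is denied by default, and all three decisions land in `log` for later review.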

The industry is currently pivoting away from consumer-facing video tools toward specialized enterprise coding utilities. How does a pay-as-you-go model affect budget forecasting for tech departments, and what does this shift suggest about the current profitability of B2B versus B2C AI applications?

The shift toward a pay-as-you-go pricing model for enterprise customers allows tech departments to scale their AI usage based on actual demand, which is much more efficient than paying for high-seat-count licenses that might go underutilized. This move highlights a broader industry retreat from expensive, compute-heavy consumer experiments like Sora 2 and a doubling down on high-value B2B utilities that offer clear ROI through developer efficiency. It suggests that the current path to profitability in AI lies in specialized “blue-collar” coding tools rather than broad, consumer-facing entertainment apps. For a budget manager, this means forecasting becomes a matter of tracking API calls and agentic activity hours, aligning tech spend directly with the volume of code produced and the number of issues triaged.
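
The forecasting arithmetic can be sketched in a few lines. Every number below is a made-up placeholder, not real pricing from OpenAI or anyone else, and a "unit" stands in for whatever the vendor meters, such as API calls or agent activity hours.

```python
# All figures are hypothetical placeholders, expressed in cents to avoid
# floating-point rounding.
SEATS = 50
PRICE_PER_SEAT_CENTS = 3000    # assumed flat monthly price per seat
PRICE_PER_UNIT_CENTS = 4       # assumed price per metered unit
PROJECTED_UNITS = [20_000, 35_000, 60_000]  # assumed usage, months 1-3


def seat_cost(seats, price_per_seat):
    """Flat monthly cost under a per-seat license."""
    return seats * price_per_seat


def usage_forecast(units_per_month, price_per_unit):
    """Monthly cost under pay-as-you-go for each projected month."""
    return [units * price_per_unit for units in units_per_month]


def break_even_units(flat_cost, price_per_unit):
    """Monthly usage at which pay-as-you-go matches the flat license."""
    return flat_cost / price_per_unit


flat = seat_cost(SEATS, PRICE_PER_SEAT_CENTS)
forecast = usage_forecast(PROJECTED_UNITS, PRICE_PER_UNIT_CENTS)
threshold = break_even_units(flat, PRICE_PER_UNIT_CENTS)
cheaper_on_usage = [cost < flat for cost in forecast]
```

Under these assumed figures, metered pricing is cheaper in the first two months and more expensive in the third, once usage passes the break-even threshold. That crossover is the point a budget manager would want to track.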

What is your forecast for AI-coding tools?

I expect that within the next 18 to 24 months, the distinction between an IDE (Integrated Development Environment) and the AI agent itself will disappear entirely. We are moving toward a future where “agentic” capabilities are the default, meaning your computer won’t just suggest the next line of code, but will actively manage your deployment pipelines and administrative overhead in the background while you sleep. The “low-grade war” between major players will settle into a landscape where the most successful tools are the ones that offer the deepest integrations, not just with GitHub, but with the entire suite of corporate communication and project management software. Ultimately, the developer’s role will shift from a “writer of code” to an “orchestrator of agents,” managing a fleet of digital assistants that handle the execution while the human focuses entirely on logic and creative strategy.