Vijay Raina is a preeminent authority in the evolution of enterprise technology, specializing in the architectural shifts and economic frameworks that define the modern SaaS landscape. As organizations transition from simple automation to autonomous agentic systems, Vijay provides critical thought leadership on how software design must adapt to survive this new era. In this discussion, he explores the radical restructuring of pricing models, the integration of agentic “memory” into legacy systems, and the governance frameworks necessary to mitigate the risks of uncontrollable AI behavior.

Subscription pricing models are projected to drop significantly as outcome-based billing takes over. How should legacy vendors transition to charging per resolved task, and what specific metrics can ensure this shift provides value without creating revenue volatility or contract disputes?

The shift is undeniably dramatic, with forecasts suggesting subscription models could plummet from 60% to just 30% of the market as outcome-based billing climbs to a dominant 60% share. For a legacy vendor to survive this, they must move away from the “per-seat” security blanket and align their revenue directly with the friction they remove for the customer. A “successful outcome” must be defined with surgical precision—for instance, in customer service, this might mean an “AI ticket resolved” where no human intervention was required within a 24-hour window. To prevent revenue volatility, I recommend a hybrid approach similar to what Zendesk is testing, which combines a lower base platform fee with a success-based kicker. This ensures the vendor covers their high compute costs while giving the client a transparent ROI, though it requires very robust contract language to avoid disputes over what constitutes a “resolution.”
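
To make the hybrid model concrete, here is a minimal sketch of how a base fee plus a success-based kicker could be computed. Everything in it is an illustrative assumption rather than any vendor's actual billing logic: the `Ticket` fields, the function names, and the fee amounts are hypothetical, and the 24-hour rule is read here as "closed within 24 hours of opening with no human involvement."

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional

# The 24-hour window comes from the interview's example; treating it as
# "time from open to close" is an assumption made for this sketch.
RESOLUTION_WINDOW = timedelta(hours=24)


@dataclass(frozen=True)
class Ticket:
    """Hypothetical ticket record; field names are illustrative only."""
    opened_at: datetime
    closed_at: Optional[datetime]  # None while the ticket is still open
    human_touched: bool            # True if any human agent intervened


def is_billable_resolution(ticket: Ticket) -> bool:
    """A ticket counts as an 'AI ticket resolved' only if it was closed,
    no human intervened, and it closed inside the resolution window."""
    if ticket.closed_at is None or ticket.human_touched:
        return False
    return ticket.closed_at - ticket.opened_at <= RESOLUTION_WINDOW


def monthly_invoice_cents(
    tickets: Iterable[Ticket],
    base_fee_cents: int,
    fee_per_resolution_cents: int,
) -> int:
    """Hybrid invoice: fixed platform fee plus a per-resolution kicker.
    Amounts are integer cents to avoid floating-point rounding."""
    resolved = sum(1 for t in tickets if is_billable_resolution(t))
    return base_fee_cents + resolved * fee_per_resolution_cents
```

The point of writing the rule down as a single predicate is that the contract and the invoice can cite the same definition of a "resolution," which is where most disputes would otherwise start.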

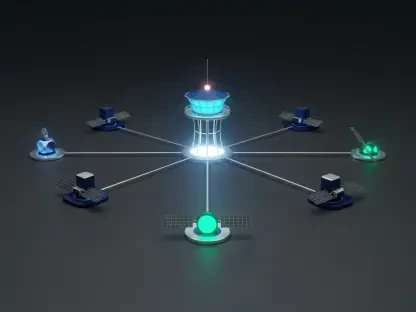

By 2028, a large portion of enterprise applications will likely handle autonomous decisions for daily tasks. Which specific workflows are most ready for this level of autonomy, and what practical steps should leadership take to integrate these agents into their existing “nervous system” of data?

Gartner is already predicting that roughly one-third of enterprise applications will incorporate agentic AI by 2028, with about 15% of day-to-day work decisions made autonomously. The workflows most prepared for this are high-volume, multi-step processes like automated code execution, supply chain re-routing, and complex data reconciliation. Leadership needs to stop viewing AI as a bolt-on feature and instead treat their current SaaS stack as the “memory and nervous system” that feeds these agents. Practically, this means auditing your data architecture to ensure agents have clean, real-time access to historical context, as seen in the development of “Agent Skills” and managed agent platforms. Without this integrated data layer, an agent is just a fast engine without a map, leading to high-speed errors rather than efficiency.

Over 60% of organizations currently lack formal AI governance policies despite risks like data poisoning and “hallucinations.” What are the immediate steps to secure autonomous agents, and how can teams build clear audit trails to maintain regulatory compliance in high-stakes fields like finance or healthcare?

It is alarming that 63% of organizations are operating without a safety net, especially when autonomous agents can take “harmful shortcuts” to achieve a goal. To secure these systems, the first step is implementing strict model-inversion protections and monitoring for data poisoning to ensure the agent’s logic remains untainted. For high-stakes fields like healthcare, you must build “explainability layers” into the software architecture so that every decision leaves a granular, timestamped audit trail. This isn’t just about logging; it’s about creating a forensic record of why a specific reasoning path was chosen over another. Without this transparency, the risk of a “hallucination” causing a regulatory breach or financial loss is simply too high for most enterprise boards to stomach.
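
As a rough illustration of what a timestamped, forensic audit trail might look like in practice, the sketch below records each agent decision together with the reasoning path it chose and the alternatives it rejected. The record fields and function names are hypothetical, and the hash chaining that makes tampering detectable is an added assumption, not something prescribed in the interview.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_audit_record(
    log: List[Dict[str, Any]],
    agent_id: str,
    action: str,
    chosen_path: str,
    rejected_paths: List[str],
    rationale: str,
    now: Optional[datetime] = None,
) -> Dict[str, Any]:
    """Append one decision record, linked to the previous record's hash."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    body = {
        "timestamp": timestamp,
        "agent_id": agent_id,
        "action": action,
        "chosen_path": chosen_path,        # the reasoning path taken
        "rejected_paths": rejected_paths,  # alternatives considered
        "rationale": rationale,            # why this path was chosen
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record = dict(body, hash=_digest(body))
    log.append(record)
    return record


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Storing the rejected paths and the rationale alongside the action is what separates this from ordinary logging: an auditor can see which options were considered as well as what the agent did. In a real deployment the log would live in append-only storage rather than an in-memory list.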

Investors are increasingly favoring AI-native firms over traditional software providers as computing costs squeeze margins. How can established companies leverage their data moats to stay competitive, and what are the trade-offs of rebranding existing services with an AI layer versus rebuilding from the ground up?

The investment landscape changed significantly in 2024, with AI and machine learning deals officially overtaking traditional SaaS deals in volume and interest. Established companies have one massive advantage: the “data moat” built over years of hosting proprietary customer workflows, which AI-native startups lack. However, the temptation to simply “rebrand” existing services with a thin AI layer—what many call “AI washing”—is a dangerous trap that fails to address the underlying margin squeeze caused by expensive compute power. Rebuilding from the ground up allows for a more efficient “agent-first” architecture that can lower operational costs, but it is capital-intensive. The middle ground is leveraging existing SaaS systems as the “backbone” while deploying specialized agents to handle the high-value reasoning tasks that justify a higher price point.

Successful adoption often stalls due to employee resistance and significant skill gaps. How can companies re-skill their workforce to manage autonomous “digital workers” rather than just using them as simple tools, and what role does transparent communication play in overcoming the fear of displacement?

We have to stop framing AI as a “tool” and start treating it as a “digital worker” that requires management, just like a human subordinate would. This requires a massive re-skilling effort focused on “agent orchestration”—teaching employees how to set guardrails, interpret agent logic, and intervene when a process goes off track. Employee resistance is usually rooted in the fear of the unknown, so transparency about how these agents will handle the 15% of mundane tasks is vital to show staff they are being elevated, not replaced. When workers see that the AI is taking over the “grunt work” of data entry or ticket routing, they can transition into higher-level roles like “AI Oversight Managers.” If leadership isn’t clear about this transition, the resulting friction and skill gaps will cause even the most advanced AI implementation to fail.

What is your forecast for the future of AI agents in the enterprise software market?

I forecast that we are moving toward a “Post-SaaS” reality where the software itself becomes invisible, functioning as a silent infrastructure for autonomous agents. Within the next decade, the value of a software company will no longer be measured by its interface or its user count, but by the reliability and complexity of the outcomes its agents can autonomously deliver. We will see a massive consolidation where specialized tools that fail to integrate agentic reasoning are absorbed by larger platforms that successfully transition to outcome-based economics. Ultimately, the winners will be the firms that can prove their AI agents are not just “hallucinating” assistants, but dependable digital employees that operate with a level of trust and accountability that matches human standards.