The rapid integration of advanced artificial intelligence into cybersecurity marks a clear departure from the era when software vulnerability research required years of specialized human training and intuition. Data from industrial security labs points to a sharp jump in model capabilities: only a short time ago, most large language models struggled with even basic diagnostic tasks. In 2026, every tested model across commercial, open-source, and underground categories has demonstrated the ability to complete complex vulnerability research independently. Approximately half of these systems can now generate functional exploits autonomously, turning what was once a defensive aid into an engine for offensive discovery.

This evolution indicates that deep code analysis has become a core competency of mainstream AI, rendering traditional manual auditing increasingly obsolete in the face of machine-driven precision and speed. Specialized security software previously dominated this niche, but general-purpose models are now showing that high-level reasoning can find flaws across diverse architectures. The shift has significant implications for how developers and security professionals approach software maintenance and threat mitigation. Organizations now face a reality in which the volume of code analyzed by machines dwarfs the output of entire security teams, so the speed of patching must match the speed of algorithmic discovery.

The Emergence: Autonomous Exploit Development

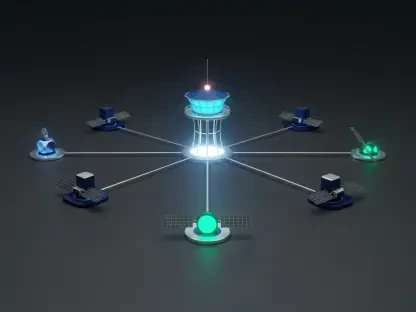

Advanced models such as Claude Opus 4.6 and Kimi K2.5 are lowering the threshold for cyberattacks by identifying and weaponizing software flaws without complex, expert-level prompting. This leap in capability was recently highlighted by the discovery of four new zero-day vulnerabilities in OpenNDS, a widely used software suite. The discovery is particularly noteworthy because the flaws resided in code that human experts had already analyzed rigorously by hand, yet they remained hidden until the AI intervened.

The efficiency of these models is often bolstered by agentic frameworks like RAPTOR, which allow the AI to operate with a high degree of independence throughout the research process. Within such a framework, the AI can iterate on its own findings, testing hypotheses and refining code until it achieves a functional exploit. This autonomy removes the human bottleneck from the vulnerability research pipeline, enabling a continuous cycle of discovery that persists without fatigue or oversight.

Furthermore, the ability to translate abstract logic into executable exploit code has shifted from a rare skill to a commoditized function of standard model APIs. This democratization of high-level hacking capabilities means that even smaller entities can now perform in-depth security research that was once the exclusive domain of state-sponsored actors or elite security firms. The result is a drastically altered global threat landscape and a need for a new standard of defensive readiness.

Strategic Shifts: Balancing Performance and Resource Allocation

The accessibility of these capabilities is further shaped by the economic divide between high-end commercial models and their open-source counterparts. Premier commercial systems offer unparalleled precision for high-stakes environments, but they often carry significant operational costs that limit their deployment. In contrast, models like DeepSeek 3.2 provide essential research functions at a lower price point, allowing broader application across sectors. With this range of tools, security teams can tailor their technology choices to specific project requirements rather than being forced into a one-size-fits-all solution.

As the proliferation of these tools makes the identification of zero-day vulnerabilities more frequent, the industry is moving toward a proactive defensive posture. Organizations recognize that maintaining digital integrity requires assuming that unknown weaknesses exist and that AI-driven systems will eventually find them. Consequently, the focus is shifting toward more resilient architectures that anticipate automated scanning and prioritize rapid, automated remediation to stay ahead of potential threats. The transition also underscores the need to integrate AI into defense-in-depth strategies, with machine-learning agents continuously auditing internal systems to find and fix bugs before external actors can exploit them. By adopting a posture of perpetual machine-led auditing, the industry can begin to close the window of opportunity for attackers, turning the very technology used for exploitation into the primary safeguard for digital infrastructure.