Vijay Raina is a distinguished expert in enterprise SaaS technology, software design, and architectural strategy. With years of experience guiding startups through the treacherous waters of early-stage development, he specializes in helping technical founders balance engineering excellence with the brutal realities of the market. In this conversation, Raina breaks down the common technical traps—from premature microservices to “gold-plating” infrastructure—that often lead to startup failure before product-market fit is ever achieved.

The following interview explores why “boring” technology is often a competitive advantage, how to implement a learning budget within engineering sprints, and the specific architectural decisions that preserve a startup’s most valuable asset: its runway.

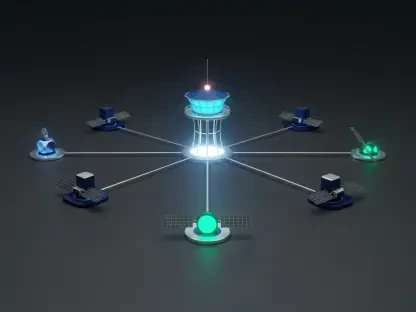

Companies like Segment and Amazon Prime Video eventually reverted from microservices to monolithic architectures to reduce complexity. When should a small engineering team prioritize modular monoliths over distributed systems, and what specific operational overhead risks are often ignored during the early development phase?

A small engineering team should almost always prioritize a modular monolith until it has at least 50 developers or a very mature product that requires independent scaling for specific components. The operational overhead risks that founders often ignore include the massive “tax” of managing multiple deployment pipelines, complex inter-service communication, and the nightmare of distributed debugging, where a single user request might pass through five different services and fail in any one of them. To set up a later transition correctly, a team should first establish clear domain boundaries within a single codebase so that modules are logically separated but physically deployed together. This approach allows you to iterate on product features weekly without being eaten alive by the need for constant health checks and infrastructure orchestration. Only once you hit a wall where a specific domain truly needs its own resources, and you have the data to prove it, should you incur the cost of breaking it out into a standalone service.
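
As a rough illustration of "logically separated but physically deployed together," here is a minimal Python sketch. The domain names (`accounts`, `billing`), the classes, and `build_app` are hypothetical and not from the interview. The point is only that billing depends on a narrow interface rather than on the internals of the accounts domain, so it could later be moved behind a network call without rewriting its callers.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol


# --- accounts domain (hypothetical) ---
@dataclass(frozen=True)
class Account:
    account_id: str
    email: str


class AccountDirectory(Protocol):
    """The only surface other domains are allowed to depend on."""

    def get_account(self, account_id: str) -> Account: ...


class InMemoryAccounts:
    """Stand-in storage; a real module would talk to the shared database."""

    def __init__(self) -> None:
        self._accounts: dict[str, Account] = {}

    def register(self, account_id: str, email: str) -> Account:
        account = Account(account_id, email)
        self._accounts[account_id] = account
        return account

    def get_account(self, account_id: str) -> Account:
        return self._accounts[account_id]


# --- billing domain (hypothetical) ---
@dataclass(frozen=True)
class Invoice:
    email: str
    amount_cents: int


class BillingService:
    """Knows about accounts only through the AccountDirectory interface."""

    def __init__(self, accounts: AccountDirectory) -> None:
        self._accounts = accounts

    def create_invoice(self, account_id: str, amount_cents: int) -> Invoice:
        account = self._accounts.get_account(account_id)
        return Invoice(email=account.email, amount_cents=amount_cents)


# --- composition root: one process, one deployment ---
def build_app() -> tuple[InMemoryAccounts, BillingService]:
    accounts = InMemoryAccounts()
    return accounts, BillingService(accounts)
```

If billing ever needs its own resources, the assumption is that only the object passed to `BillingService` changes (for example, to an HTTP-backed implementation of `AccountDirectory`), while the rest of the codebase stays as it is.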

Research shows developers spend roughly 30% of their time on maintenance rather than new features, costing billions annually. How can founders distinguish between essential stability and “gold-plating” infrastructure, and what managed services should they prioritize to ensure they can charge customers within the first 30 days?

Founders can distinguish essential stability from “gold-plating” by asking a simple question: “Will a customer pay for this specific technical improvement today?” If you are spending months perfecting a bespoke Kubernetes cluster or a hand-rolled authentication system instead of shipping a functional prototype, you are procrastinating under the guise of best practices. To hit that 30-day window for charging customers, founders should prioritize managed services for everything that isn’t their core value proposition, such as using Heroku for hosting or Stripe for payments. The goal is to maximize the “Developer Coefficient” by ensuring every hour of engineering time is spent on the 70% of work that actually moves the needle on product-market fit. We’ve seen that 20% of startups now charge their first customer within a month of incorporation by ignoring the urge to build custom infrastructure and instead leveraging off-the-shelf tools that provide an immediate feedback loop.

PostgreSQL handles most SaaS needs, yet teams often jump to exotic or multi-database setups prematurely. Under what circumstances do hyper-normalized schemas become a liability for iteration speed, and how does using JSON columns provide a middle ground for data that changes shape frequently?

Hyper-normalized schemas become a major liability when your product requirements are changing weekly and every small feature update requires a complex migration across 300 rigid tables with strict foreign key constraints. This rigidity slows your iteration speed, which is your survival speed at this stage, because you spend more time managing database state than testing new hypotheses with real users. Using JSON columns in PostgreSQL offers a vital middle ground: you can store semi-structured data that evolves without a schema change, which gives you much of the flexibility of a document store like MongoDB while keeping the transactional integrity of a relational engine. For an MVP, I recommend sticking to one database and using simple schemas that lean on these JSON fields for any data that is likely to change shape. You should only move to specialized databases like Elasticsearch or Redis once your user base has grown well beyond a few dozen beta testers and your query patterns actually demand that level of performance.
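
The JSON-column idea can be sketched in a few lines. To keep the example runnable without a database server, this sketch uses Python's built-in `sqlite3` module and SQLite's `json_extract` function as a stand-in for PostgreSQL. It assumes a SQLite build with JSON support, and the table and field names are hypothetical. In PostgreSQL the column would typically be declared as `JSONB`, and the filter would be written as `profile->>'plan' = 'pro'`.

```python
import json
import sqlite3

# In-memory SQLite database as a stand-in for PostgreSQL (assumption).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers ("
    " id INTEGER PRIMARY KEY,"
    " email TEXT NOT NULL UNIQUE,"      # stable, relational part of the schema
    " profile TEXT NOT NULL DEFAULT '{}'"  # JSON document for fast-changing fields
    ")"
)


def add_customer(email: str, profile: dict) -> int:
    """Insert a customer; the profile dict is stored as a JSON document."""
    with conn:  # commits on success, rolls back on error
        cur = conn.execute(
            "INSERT INTO customers (email, profile) VALUES (?, ?)",
            (email, json.dumps(profile)),
        )
    return cur.lastrowid


def emails_on_plan(plan: str) -> list:
    """Filter on a field inside the JSON document."""
    rows = conn.execute(
        "SELECT email FROM customers"
        " WHERE json_extract(profile, '$.plan') = ?"
        " ORDER BY id",
        (plan,),
    )
    return [row[0] for row in rows]


add_customer("a@example.com", {"plan": "trial"})
# A new field appears in week two; no migration is needed to store it.
add_customer("b@example.com", {"plan": "pro", "referral_source": "newsletter"})
```

Fields that prove stable and are queried often can later be promoted to regular columns. The JSON column only defers that decision until the shape of the data has settled.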

With a median runway of 22 months from fundraise to failure, how should technical founders implement a “learning budget” for each sprint? What specific metrics or customer behaviors should dictate whether a feature is built or deleted from the backlog to avoid the trap of constant building?

To implement a learning budget, a team must define the specific assumption they are testing and the metric that will confirm or reject it before a single line of code is written for a new feature. If a proposed task doesn’t map to a testable user behavior—such as a user completing an onboarding flow or clicking a specific “pay” button—it should stay at the bottom of the backlog, regardless of how much an engineer wants to build it. Founders should look for red flags like having a backlog of 200 items while having spoken to fewer than 20 potential customers, as this indicates you are optimizing code paths that nobody will ever use. A healthy sprint should be judged by how much was learned about the ideal customer’s struggles, leading you to delete one planned feature for every one you build. This discipline ensures that you don’t run out of your 22-month runway polishing a sophisticated CI/CD pipeline for a product that has zero paying users.
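
One way to make this rule concrete is to require every backlog item to carry its assumption and metric as data. The sketch below is illustrative only. The `BacklogItem` fields, the `triage` function, and the decision rule (compare an observed value to a target) are assumptions rather than a method described in the interview.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class BacklogItem:
    title: str
    assumption: Optional[str] = None   # what we believe about the customer
    metric: Optional[str] = None       # the behavior that would confirm it
    target: Optional[float] = None     # threshold that counts as "confirmed"
    observed: Optional[float] = None   # filled in after the experiment runs

    def is_testable(self) -> bool:
        """An item is testable only if it names an assumption, a metric, and a target."""
        return bool(self.assumption and self.metric and self.target is not None)


def triage(item: BacklogItem) -> str:
    """Return 'park', 'build', 'keep', or 'delete' for a backlog item."""
    if not item.is_testable():
        return "park"      # stays at the bottom of the backlog
    if item.observed is None:
        return "build"     # worth building the smallest version that tests it
    # Hypothetical rule: the metric either met its target or it did not.
    return "keep" if item.observed >= item.target else "delete"


polish_ci = BacklogItem(title="Rewrite CI/CD pipeline")
pay_button = BacklogItem(
    title="Add pay button to onboarding",
    assumption="Trial users will pay before seeing reports",
    metric="share of trial users clicking pay",
    target=0.05,
)
```

Here `triage(polish_ci)` returns `"park"` because the item names no testable behavior, while `triage(pay_button)` returns `"build"`. Once `observed` is recorded, the same function says whether the feature stays or is deleted.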

Established frameworks like Ruby on Rails and Django power massive platforms; Shopify and Basecamp, for example, are built on Rails. Why does “boring” technology often lead to faster product-market fit than trendy, unproven stacks, and how can a team determine if a new technology is a competitive advantage or a distraction?

“Boring” technology leads to faster product-market fit because it comes “batteries-included,” handling mundane but essential tasks like authentication, database migrations, and admin panels out of the box so you don’t have to. When you choose a trendy stack with only 200 GitHub stars, you inevitably hit roadblocks where documentation is missing or basic libraries don’t exist, forcing your team to waste weeks solving solved problems. A team can determine if a new technology is a distraction by asking: “Will my customers actually notice this choice in their daily experience?” If you are building a real-time collaboration tool, a cutting-edge framework for live updates is a competitive advantage; if you are building an invoicing app, using a bleeding-edge database is just pure risk with no customer benefit. Sticking to proven tools like Rails or Django allows you to focus your limited engineering creativity on solving the unique problems your customers are actually willing to pay for.

Do you have any advice for our readers?

The most successful technical founders I’ve seen are the ones who embrace the “Build-Measure-Learn” cycle with radical honesty. My advice is to audit your current development sprint today and count how many tasks are infrastructure-related versus features requested by a paying customer; if the infrastructure work dominates, you need to stop and shift your focus. Don’t wait for technical perfection to launch, because you can’t buy back the months spent building the wrong thing with a “perfect” architecture. Ship what you have within eight weeks, put it in front of people who might actually pay for it, and let their behavior dictate your next move—scale only when the growth starts to hurt.