A single contract clause can bend an industry’s trajectory, and the Microsoft–OpenAI reset did exactly that by trading indefinite, AGI-triggered exclusivity for a calendar-bound deal that unlocked multi-cloud distribution and defused a brewing clash with Amazon. This roundup brings together views from CIOs, cloud economists, product leaders, compliance officers, and model engineers to explain what changed, why it matters now, and how to navigate the next phase of AI infrastructure strategy.

From AGI-Tied Exclusivity to a Countdown Clock: Context, Stakes, and a Map for What Follows

Across enterprise circles, the dominant reaction is relief mixed with resolve. CIOs describe a shift from contractual fog to usable daylight: a nonexclusive license through 2032 replaces slippery AGI milestones and sets a predictable horizon for platform planning. Product leaders frame it as a distribution unlock that shrinks rollout gaps across clouds, even if exact timing still hinges on “first on Azure” details.

Cloud strategists add that the move neutralized a legal tinderbox created by OpenAI’s commitments to Amazon. By normalizing cross-cloud delivery, the parties traded maximal control for broader reach and less litigation risk. Analysts reading the fine print emphasize one throughline: predictability boosts adoption, and adoption reshapes power.

Inside the Reset: How the 2032 License, ‘Primary on Azure,’ and Multi-Cloud Freedom Reshape the Field

Replacing Forever With a Finish Line: The 2032 Nonexclusive License, ‘First on Azure’ Ambiguity, and Shifting Revenue Flows

Legal advisors laud the calendar endpoint as governance-friendly and financeable; undefined AGI triggers had been a magnet for disputes. Procurement teams say the 2032 horizon lets them ladder multi-year commitments without betting on speculative milestones.

Revenue mechanics are read as a quiet win for Microsoft and a pragmatic pivot for OpenAI. Economists note the end of Microsoft’s outbound revenue share and a capped inbound share from OpenAI through 2030, layered atop Microsoft’s sizable equity stake. The “first on Azure” clause, however, draws split views: some hear a brief exclusivity window; others hear simple sequencing. Either way, it signals investor-grade priority without shutting the multi-cloud door.

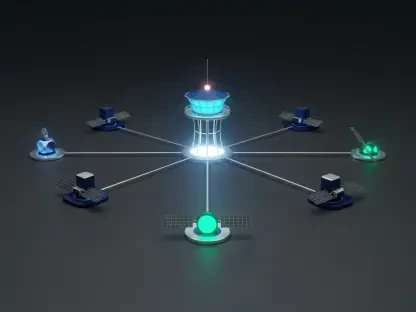

Ending the Amazon Clash: AWS Bedrock, Frontier Hosting, and the Normalization of Cross-Cloud Delivery

Observers close to AWS see validation: OpenAI can meet its Bedrock obligations and collaborate on a stateful runtime without breaching earlier exclusivity terms. That clarity unlocks joint roadmaps for regulated workloads seeking parity across cloud estates.

Vendor-neutral architects argue the bigger story is cultural. Once a flagship lab commits to cross-cloud distribution, it makes single-cloud roadmaps look dated. What began as a truce now reads as precedent: launch early on Azure, then expand; pressure mounts for near-synchronous availability elsewhere.

Agents Need State: Why ‘Stateful Runtime’ Tilts the Infrastructure Race and What That Means for Buyers

Model engineers converge on one point: agentic systems rise or fall on state management. Long-lived memory, tool access, and event-driven loops demand durable orchestration, not just stateless API calls. That is why stateful runtime became a bargaining chip: it defines where high-value workloads live.

Infrastructure leads highlight buyer impact. If state is portable, multi-cloud arbitrage gets real; if state is sticky, the first platform to productize agent runtime can capture gravity. The reset keeps Azure in pole position while allowing AWS to contend on native agent tooling, pushing Google and others to harden their own agent stacks.
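To make the portable-versus-sticky distinction concrete, here is a minimal sketch of what portable agent state could look like. It assumes state is serialized to a vendor-neutral JSON blob behind a small storage interface. `AgentState`, `StateStore`, `InMemoryStore`, and `migrate` are hypothetical names for illustration, not any provider's actual runtime API.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Protocol


@dataclass
class AgentState:
    """Hypothetical agent state: long-lived memory plus in-flight work."""
    session_id: str
    step: int = 0
    memory: list[str] = field(default_factory=list)
    pending_tools: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        # A neutral wire format keeps the state readable outside one runtime.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "AgentState":
        return cls(**json.loads(raw))


class StateStore(Protocol):
    """Assumed minimal contract that any cloud's state store would satisfy."""
    def put(self, key: str, blob: str) -> None: ...
    def get(self, key: str) -> str: ...


class InMemoryStore:
    """Stand-in for a managed memory store on a given cloud."""
    def __init__(self) -> None:
        self._blobs: dict[str, str] = {}

    def put(self, key: str, blob: str) -> None:
        self._blobs[key] = blob

    def get(self, key: str) -> str:
        return self._blobs[key]


def migrate(session_id: str, source: StateStore, target: StateStore) -> AgentState:
    """Copy one session's state between stores and rehydrate it from the target."""
    target.put(session_id, source.get(session_id))
    return AgentState.from_json(target.get(session_id))
```

If a runtime only exposes state through proprietary handles, a `migrate` step like this has nothing to copy, which is the "sticky" case buyers are warned about.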

Money, Leverage, and Hedges: Equity Stakes, Capped Revenue Share, and Microsoft’s Parallel Bets With Other Model Labs

Investors see a classic barbell: Microsoft gives up exclusivity yet tightens financial alignment via equity and inbound share, cushioning any Azure leakage as OpenAI expands elsewhere. OpenAI, for its part, widens market reach while keeping Azure as its primary cloud, preserving scale economics.

Strategists call out hedging on all sides. Microsoft courts additional model labs to power its own agent ambitions; OpenAI diversifies infrastructure and channels. The lesson, according to procurement advisors, is simple: plan for a rotating cast of model providers and runtime services, not a monolith.

What to Do Now: Playbooks for CIOs, Product Leaders, and Cloud Strategists

CIOs in regulated industries recommend setting a two-lane roadmap: standardize on Azure for early access and support depth, while validating equivalent stacks on AWS (and a tertiary cloud) for resilience and negotiation leverage. Contract clauses should capture portability of state, exit rights from agent runtimes, and clear SLAs for cross-cloud latency and data residency.

Product leaders urge teams to separate concerns: pick models by task fit, pick clouds by data gravity, and pick runtimes by state guarantees. Security leaders add that the control plane deserves its own review—key management, audit trails, and policy enforcement must operate consistently even as inference endpoints shift.

Cloud strategists advise updating TCO models. Account for egress on agent state, cross-cloud caching, and managed memory stores; price in data and policy duplication. Pilot with representative agent workloads, not toy prompts, and treat “first on Azure” as a sequencing risk to be mitigated with feature flags and abstraction layers.
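As a rough illustration of the line items above, a TCO model might start as small as the following sketch. Every field name and figure is a hypothetical placeholder, not a real price or a vendor's billing schema; the point is only that state egress, caching, and duplication become explicit terms rather than rounding errors.

```python
from dataclasses import dataclass


@dataclass
class AgentWorkloadCosts:
    """Monthly cost inputs for one agent workload (all values hypothetical)."""
    inference_usd: float
    state_egress_gb: float           # agent state moved between clouds
    egress_usd_per_gb: float
    cross_cloud_cache_usd: float
    managed_memory_store_usd: float
    duplication_usd: float           # duplicated data and policy tooling


def monthly_tco(c: AgentWorkloadCosts) -> float:
    """Sum inference with the state-related line items."""
    egress = c.state_egress_gb * c.egress_usd_per_gb
    return (
        c.inference_usd
        + egress
        + c.cross_cloud_cache_usd
        + c.managed_memory_store_usd
        + c.duplication_usd
    )


def cross_cloud_share(c: AgentWorkloadCosts) -> float:
    """Fraction of monthly TCO driven by egress, caching, and duplication."""
    total = monthly_tco(c)
    if total == 0:
        return 0.0
    cross_cloud = (
        c.state_egress_gb * c.egress_usd_per_gb
        + c.cross_cloud_cache_usd
        + c.duplication_usd
    )
    return cross_cloud / total
```

Feeding the model with measurements from a representative agent pilot, rather than list prices alone, is what makes the cross-cloud share meaningful.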

Beyond the Truce: Durable Implications for Competition, Governance, and the Multi-Cloud AI Era

Competition watchers judge the reset as a structural turn toward multi-cloud AI, with agents as the organizing workload. As stateful runtime becomes a product category rather than a feature, hyperscalers will race to anchor data, memory, and policy stores that convert workloads into long-term platform commitments.

Governance specialists see clarity replacing mythology. Dropping AGI-based triggers in favor of a calendar term reduced ambiguity, brought discipline to capital planning, and simplified oversight. Yet one caveat remains: “first on Azure” still lacks public specificity, so rollout choreography will continue to test vendor claims.

For readers seeking depth, the most helpful next steps are to track cloud provider announcements on agent runtimes, review emerging benchmarks for stateful workloads, and study vendor security attestations around memory, lineage, and policy enforcement. In practice, portfolio thinking beats vendor loyalty, procurement language around portability pays dividends, and pilots that measure state behavior under load separate marketing from material capability.