The traditional boundaries of enterprise cloud computing are dissolving as Google Cloud orchestrates a fundamental pivot toward an era defined by autonomous agentic intelligence. This transition represents far more than a routine software update or the introduction of a new product line; it is a profound reset of how enterprise architecture functions at its most basic level. By drawing on its engineering heritage and committing massive capital expenditure, Google is attempting to solve the fragmentation that has plagued the modern data stack for over a decade. The shift focuses on moving away from reactive “systems of record” toward proactive “systems of agency” that do not just store or analyze data but actively execute complex business processes with minimal human intervention. This evolution is driven by the growing complexity of global markets, where the speed of human decision-making has become a bottleneck that autonomous, vertically integrated AI is positioned to relieve.

This strategic evolution is underpinned by a commitment to vertical integration that spans from custom-designed silicon to high-level application frameworks. As businesses grapple with the limitations of legacy systems, the demand for a cognitive engine capable of operating at machine scale has never been higher. Google Cloud is positioning itself as the primary architect for this new reality, offering a unified environment where intelligence is not an add-on but a foundational element of the infrastructure. This approach allows for a level of scalability and reliability that was previously unattainable, enabling enterprises to transition their most critical workflows into autonomous loops. By focusing on the intersection of high-performance computing and generative intelligence, the company is redefining the value proposition of the hyperscale provider in a world that no longer views simple data storage as a competitive advantage.

The Structural Evolution of Cloud Architecture

Moving Toward Autonomous Decision Loops: The Death of Passive Insights

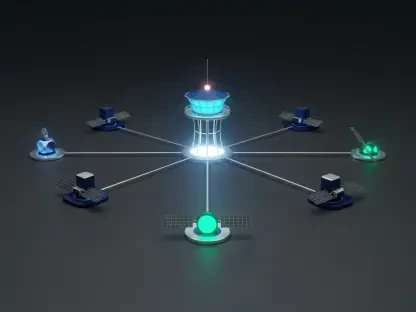

The era of the “modern data stack,” which focused heavily on centralizing data for human review via dashboards and static reports, is rapidly reaching its natural conclusion. In the previous technological cycle, the ultimate goal of data engineering was to provide a human actor with the information necessary to make a decision, a process that inherently introduced latency and subjective error. Today, the industry is witnessing a tectonic shift toward autonomous decision loops where AI agents are empowered to take direct action within defined parameters. These agents operate at a scale and velocity that render human-centric systems obsolete, requiring an entirely different architectural foundation. For an agent to be effective, it must possess the ability to perceive changes in the environment, reason through possible outcomes, and execute a response across multiple integrated platforms without waiting for manual approval.
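The perceive, reason, and execute cycle described above can be sketched in a few lines. This is a minimal illustration, not any vendor's agent framework: the inventory scenario, the function names (`perceive`, `reason`, `within_parameters`, `run_loop`), and the `max_amount` guardrail are all hypothetical, chosen only to show how "direct action within defined parameters" might be expressed.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    amount: float


def perceive(readings: list[float]) -> float:
    """Perceive: reduce the incoming signal stream to the latest observation."""
    return readings[-1]


def reason(observed: float, target: float) -> Optional[Action]:
    """Reason: propose an action only when the observation falls short of target."""
    shortfall = target - observed
    if shortfall <= 0:
        return None
    return Action(name="reorder", amount=shortfall)


def within_parameters(action: Action, max_amount: float) -> bool:
    """Guardrail: the agent may act alone only up to a defined limit."""
    return action.amount <= max_amount


def run_loop(
    readings: list[float],
    target: float,
    max_amount: float,
    execute: Callable[[Action], None],
) -> str:
    """One pass of the loop: perceive, reason, then execute or escalate."""
    observed = perceive(readings)
    action = reason(observed, target)
    if action is None:
        return "no_action"
    if not within_parameters(action, max_amount):
        # Outside the defined parameters: hand off instead of acting.
        return "escalated"
    execute(action)
    return "executed"


# Hypothetical stock readings; the latest value (80) is below the target (100).
executed: list[Action] = []
status = run_loop([120.0, 80.0], target=100.0, max_amount=50.0, execute=executed.append)
```

In this sketch the only path that bypasses a human is the one where the proposed action stays inside the limit; anything larger is returned as `"escalated"`, which is one simple way to keep autonomy bounded.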

This transition creates a massive technical challenge for organizations that are currently hampered by fragmented governance models and batch-processed data pipelines. Legacy architectures were typically designed around a perimeter-based security model and siloed data repositories, both of which are incompatible with the requirements of an autonomous agentic system. An AI agent requires a continuous stream of real-time data and a security framework that can manage machine-to-machine trust with high granularity. To address this, Google Cloud is championing a unified environment where the cognitive engine—the large language model—is situated within the same trusted boundary as the transactional and analytical data. This proximity reduces the friction of data movement and ensures that the agent can act on the most current information while adhering to strict compliance and safety protocols that are baked into the core infrastructure.

The implementation of these autonomous loops is forcing a reimagining of how software itself is constructed and deployed. Instead of rigid code that follows a predetermined path, developers are now building systems that are guided by intent and high-level objectives. This shift requires a robust orchestration layer that can manage the handoffs between different specialized agents, ensuring that a complex task like supply chain optimization or real-time fraud mitigation is handled with precision. By providing the tools to build these closed-loop systems, Google is enabling enterprises to move beyond the limitations of human-scale operations. The result is a more resilient and responsive business model that can adapt to market fluctuations in milliseconds, fundamentally changing the competitive landscape for any organization that relies on data-driven execution.

The Power of Full-Stack Integration: Controlling the Silicon to Software Pipeline

Google Cloud possesses a structural advantage that is increasingly difficult for competitors to replicate, rooted in its control over the entire technology stack from the physical hardware to the final user interface. While many other cloud providers rely on a patchwork of third-party vendors and external partnerships to build their AI offerings, Google has spent decades refining a vertically integrated pipeline. This control starts at the compute layer with proprietary Tensor Processing Units (TPUs) and extends through a global, low-latency fiber-optic network that was designed specifically to handle the massive data requirements of web-scale services. In the context of agentic AI, this integration is critical because the performance of an autonomous agent is often limited by the slowest link in the chain, whether that is data retrieval speeds or the latency of model inference.

In a highly complex agentic environment, the bottlenecks are not static; they shift dynamically between data movement, memory bandwidth, and the financial cost of processing individual tokens. Google’s ability to optimize across these variables in a synchronized fashion allows it to tune infrastructure for specific high-performance workloads in a way that generalized platforms cannot. For instance, by integrating its high-performance Spanner database directly with Vertex AI, the company enables agents to access and update transactional records with almost zero overhead. This eliminates the “glue work” that typically consumes significant engineering resources, allowing organizations to focus on the logic of their agents rather than the plumbing of their data systems. This end-to-end control is the primary reason why Google can offer superior performance-per-watt and more predictable pricing for massive AI deployments.

Furthermore, this full-stack approach provides a level of security and identity management that is essential for enterprise-grade AI. When an agent acts on behalf of a human, the risk of unauthorized access or data leakage rises sharply if the system is composed of multiple disparate platforms, because each platform boundary adds another set of credentials and policies to keep in sync. By maintaining a unified surface for security policy enforcement, Google ensures that every action taken by an agent is logged, audited, and constrained by the same identity and access management protocols that govern human users. This holistic view of the system allows for more sophisticated threat detection and mitigation, as the infrastructure can recognize anomalous agent behavior that might be invisible to a more fragmented setup. Ultimately, the power of full-stack integration lies in its ability to provide a seamless, high-velocity environment that is as secure as it is capable.
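The idea of holding agents and humans to the same policy check, with every decision logged, can be illustrated with a small sketch. It does not use or imitate the Google Cloud IAM API; `Principal`, `AuditLog`, `authorize`, and the permission strings are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Principal:
    name: str
    kind: str               # "human" or "agent" (hypothetical labels)
    permissions: frozenset  # permission strings granted to this principal


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, principal: Principal, permission: str, allowed: bool) -> None:
        # Every decision is logged, whether it was allowed or denied.
        self.entries.append((principal.name, principal.kind, permission, allowed))


def authorize(principal: Principal, permission: str, log: AuditLog) -> bool:
    """Single enforcement point: the same check applies to humans and agents."""
    allowed = permission in principal.permissions
    log.record(principal, permission, allowed)
    return allowed


log = AuditLog()
agent = Principal("refund-agent", "agent", frozenset({"orders.read"}))

can_read = authorize(agent, "orders.read", log)    # granted permission
can_write = authorize(agent, "orders.write", log)  # not granted, so denied
```

Because both outcomes land in the same log, a denied request from an agent is as visible as an approved one, which is the property the paragraph above relies on for detecting anomalous agent behavior.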

Capital and Financial Engines of Growth

Strategic Investment in Infrastructure: The Race for Scale

The ongoing “arms race” in the hyperscaler market is characterized by a level of capital expenditure that was once considered unimaginable, with Google leading the charge. Alphabet’s current spending cycle is projected to approach $200 billion, a figure that highlights the sheer scale required to build and maintain the infrastructure for an agentic world. This capital is not being deployed blindly; it is a calculated investment in the physical and virtual assets that will define the next decade of technological dominance. From massive data center campuses that span hundreds of acres to the undersea cables that connect continents, the goal is to create a global compute fabric that can support the training and deployment of ever-larger and more sophisticated frontier models. This level of investment creates a high barrier to entry, effectively narrowing the field to a handful of players who have the balance sheets to compete.

A critical component of this infrastructure strategy is the bifurcation of compute resources between industry-standard NVIDIA GPUs and Google’s own proprietary TPUs. By maintaining a mature, internal silicon roadmap, Google has protected itself from the supply chain volatility and pricing fluctuations that have impacted other firms in the industry. These TPUs are not just a hedge against scarcity; they are highly specialized accelerators that are often more efficient for the specific training and inference tasks required by agentic workflows. This dual-source strategy ensures that Google can satisfy the diverse demands of its customer base—providing the latest NVIDIA hardware for those who need it while offering its own high-performance alternatives for those looking to optimize their cost-to-performance ratios. This flexibility is a key differentiator in a market where compute capacity is often the primary constraint on innovation.

The market has generally viewed this aggressive spending as a necessary prerequisite for long-term growth, especially given the “cash machine” nature of Google’s core advertising business. Unlike startups or less diversified technology companies that might face immediate liquidity pressures from such high capital expenditure, Google can leverage its existing revenue streams to fund this massive infrastructure transition. This financial cushion allows the company to take a long-term view, investing in the fundamental research and development needed to solve the hardest problems in AI. Investors have rewarded this approach, seeing the buildup of physical infrastructure as a tangible asset that will generate recurring revenue for years to come. As the demand for autonomous agents grows, the providers who own the most efficient and scalable infrastructure will be the ones who capture the lion’s share of the emerging market value.

Market Performance and Revenue Gains: Proving the Economic Model

Google Cloud is currently operating at a run-rate of approximately $72 billion, a milestone that signals its transition from a high-growth experiment to a mature, profitable pillar of the Alphabet ecosystem. While it still trails the total revenue figures of the market leaders, the business is demonstrating significant operating leverage, with margins that continue to improve even as investment scales. This financial trajectory is a clear indication that the company’s focus on high-value AI services is resonating with the market. The growth is not merely a reflection of general cloud adoption but is increasingly driven by a “flight to quality” among enterprises that require the sophisticated engineering and data capabilities that Google provides. As the market for IaaS and PaaS matures, the ability to offer differentiated, intelligence-driven services is becoming the primary driver of new revenue.

Current projections for the infrastructure and platform services market suggest that Google Cloud will continue to grow at a mid-40% rate through the next several quarters, potentially reaching a $42 billion infrastructure business by the end of 2026. While the absolute incremental revenue of competitors like AWS and Azure remains larger due to their existing scale, Google is effectively acting as a “share taker” in the most critical growth segments. This is particularly evident in the high-end enterprise market, where organizations are moving beyond simple cloud migration toward the total transformation of their business processes. By positioning itself as the leader in the agentic AI space, Google is capturing a larger percentage of the high-margin budgets that are being reallocated from traditional software and services toward autonomous systems.

This momentum is further validated by the increasing concentration of the cloud market among the top three providers, with Google successfully cementing its position as an essential choice for any multi-cloud or AI-first strategy. The narrative that Google is a “distant third” is rapidly fading as the company’s technical advantages in AI translate into tangible financial results. The stability of the business, combined with its high growth rate, provides a compelling case for its long-term viability in a competitive landscape. As the unit of value in the cloud shifts from the virtual machine to the autonomous outcome, Google’s history of optimizing for massive scale and complex workloads is proving to be a decisive advantage. The financial success of the platform is a testament to the fact that technical excellence, when backed by significant capital, can eventually overcome the head start of early market entrants.

Quantifying Market Sentiment and AI Momentum

Customer Spending Trends and Net Scores: Measuring Real-World Adoption

Analyzing market sentiment through metrics such as “Net Scores” reveals a clear picture of Google Cloud’s rising influence within the enterprise IT landscape. A Net Score subtracts the share of negative signals, such as churn or spending decreases, from the share of positive signals, such as new adoptions or budget increases. On this measure, Google Cloud currently sits in a “highly elevated” momentum zone. This data suggests that the platform has moved past the stage of experimental interest and is now being integrated into the core operational budgets of major global corporations. This shift is critical because it indicates a level of trust and permanence that was less certain in previous years. IT leaders are no longer just testing Google’s AI tools; they are committing significant long-term resources to the platform, viewing it as a primary engine for their own digital transformation.
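The Net Score arithmetic can be made concrete with a short sketch. The response categories and the survey counts below are invented purely for illustration; the article does not publish the underlying figures, and treating a "flat" group as neutral is an assumption of this example.

```python
def net_score(responses: dict[str, int]) -> float:
    """Positive share minus negative share, in percentage points.

    Assumed categories (hypothetical): 'adopting' and 'increasing' count as
    positive, 'decreasing' and 'churning' as negative, and 'flat' as neutral.
    """
    total = sum(responses.values())
    if total == 0:
        raise ValueError("no survey responses")
    positive = responses.get("adopting", 0) + responses.get("increasing", 0)
    negative = responses.get("decreasing", 0) + responses.get("churning", 0)
    return 100.0 * (positive - negative) / total


# Illustrative counts only, not real survey data.
sample = {"adopting": 10, "increasing": 40, "flat": 40, "decreasing": 7, "churning": 3}
score = net_score(sample)  # (50 - 10) / 100 -> 40.0
```

Respondents who report flat spending count toward the total but toward neither side, so a large neutral group pulls the score toward zero even when positive signals outnumber negative ones.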

The growth Google is experiencing is notably driven by expansion within its existing customer base, a hallmark of a maturing and durable technology platform. When an enterprise increases its commitment to a specific cloud provider, it signals that the initial projects were successful and that the platform’s ecosystem is broad enough to support diverse use cases. This internal expansion is often more profitable and sustainable than new logo acquisition, as it indicates a deep integration into the customer’s business processes. The data shows that account penetration has been on a steady upward trajectory since early 2023, coinciding with the surge in practical applications for generative AI. Enterprises are consolidating their AI initiatives on Google Cloud because it offers the most coherent path from research and development to full-scale production.

Furthermore, the “pervasion” of Google Cloud—the percentage of total enterprises that have at least some footprint on the platform—is reaching new heights. This increasing presence in the enterprise estate is a leading indicator of future growth, as it provides a foundation for the upselling of more advanced services. As organizations become comfortable with Google’s core infrastructure, they are more likely to adopt its sophisticated AI and data analytics tools. The feedback from IT decision-makers indicates that Google’s reputation for solving the most difficult engineering problems is a major factor in their selection process. In an era where the complexity of AI can be overwhelming, the perceived technical depth of a provider becomes a primary competitive differentiator, allowing Google to win business even in accounts that were traditionally dominated by other hyperscalers.

The Gemini Edge and AI Velocity: Vertical Value in the Model Era

The most striking trend in current spending data is the massive acceleration of investment specifically directed toward machine learning and AI, where Google’s momentum is significantly higher than general cloud trends. This surge in spending velocity is largely attributed to the successful rollout and integration of the Gemini model family. Google remains the only hyperscaler that possesses its own proprietary, top-tier frontier model that is natively integrated into its entire cloud ecosystem. While competitors often act as distributors for third-party models, Google’s ownership of the Gemini architecture allows it to offer a level of vertical value that is unique in the market. This includes the ability to optimize the model’s performance on specific hardware and to provide deep integration with other cloud services like BigQuery and Workspace.

This vertical integration provides a more seamless and cost-effective experience for enterprises that are looking to move beyond simple chat interfaces to sophisticated agentic applications. Because Google controls both the model and the infrastructure it runs on, it can offer more flexible pricing models and better performance guarantees than providers who must pay a “middleman tax” to model developers. This economic advantage is increasingly important as companies look to scale their AI initiatives from small pilot programs to global deployments. The spending signals indicate that a majority of customers who are engaging with Google’s AI tools plan to significantly increase their investment, a clear sign that they are finding tangible value in the “Gemini edge.” This isn’t just about the quality of the model’s outputs; it’s about how easily that intelligence can be woven into the fabric of the enterprise.

Moreover, the velocity of AI adoption is being fueled by Google’s ability to operationalize these models through platforms like Vertex AI. By providing a comprehensive suite of tools for model tuning, deployment, and monitoring, Google is lowering the barrier to entry for companies that do not have thousands of specialized AI engineers. This democratization of high-end intelligence is a key part of the company’s strategy to win the agentic era. The market is rewarding this approach with high budgetary allocations, as IT leaders recognize that the success of their AI initiatives depends on more than just the underlying model. It requires a robust, integrated platform that can handle the complexities of data governance, security, and real-time execution. Google’s position at the top of the AI spend momentum charts is a direct result of its ability to deliver this complete package to the enterprise.

Positioning for the Agentic Future

Comparative Peer Analysis: Navigating the Competitive Landscape

An evaluation of the competitive landscape of 2026 shows that, while Microsoft Azure and AWS maintain the largest overall footprints, the qualitative nature of the competition has changed. Microsoft continues to leverage its deep integration with the Windows and Office ecosystems to drive AI adoption, while AWS remains the standard for broad infrastructure and a massive partner ecosystem. However, Google Cloud has successfully carved out a distinct position as the leader in “engineering-first” AI, attracting organizations that require the highest levels of performance and customizability. The gap in total account penetration is closing, as Google’s momentum in the AI segment acts as a powerful “wedge” to enter accounts that were previously locked into other providers. The market increasingly sees Google not as a secondary option, but as a specialized powerhouse for high-stakes autonomous workloads.

This shift in perception is evidenced by the way enterprises are now structuring their multi-cloud strategies. In the past, Google was often used for specific, isolated tasks like data analytics or specialized machine learning projects. Today, it is increasingly being chosen as the primary cloud provider for the entire “intelligence layer” of the business. This means that even if a company keeps its legacy systems on AWS or Azure, it is building its next-generation agentic applications on Google Cloud. This “best-of-breed” approach favors Google’s strengths in vertical integration and proprietary silicon, as these are the factors that most directly impact the ROI of an AI initiative. The data suggests that as the market matures, the technical nuances of the underlying platform are becoming more important to the C-suite than historical relationships or general-purpose offerings.

Specialized players like CoreWeave or Oracle also play a role in the market, but they tend to focus on specific niches—CoreWeave on raw GPU capacity and Oracle on its strong heritage in database systems. Google’s advantage lies in its ability to offer both the specialized high-performance compute and a broad, integrated platform of services. This makes it a more versatile partner for large enterprises that need to balance innovation with operational stability. By cementing its place as the essential third option, Google has fundamentally disrupted the duopoly that once defined the cloud industry. The competitive battle is no longer just about who can provide the most virtual machines; it is about who can provide the most intelligent and autonomous business outcomes.

Core Strategy and Future Benchmarks: From Tools to Outcomes

The overarching strategy for Google Cloud is defined by its transition from a provider of specialized tools to a provider of tangible business outcomes. This shift is visible in the way the company is rethinking its service offerings and pricing models, moving toward a focus on measurable results such as reduced operational latency or improved decision accuracy. The “Agentic Data Cloud” is the ultimate expression of this strategy, representing a future where transactional data, real-time analytics, and autonomous AI are integrated into a single, seamless environment. This eliminates the traditional barriers between “knowing” and “doing,” allowing businesses to act on information as soon as it is generated. The goal is to provide a “closed-loop” system where the enterprise can function with a level of agility that was previously impossible.

As the industry moves forward, the primary benchmark for success will be the ability of these AI systems to deliver a clear return on investment. The market is moving beyond the “honeymoon phase” of AI experimentation and is now demanding proof of productivity gains and cost savings. For Google, this means moving beyond impressive technical demonstrations to documented case studies of large-scale, autonomous deployments that have fundamentally improved a company’s bottom line. Success will also depend on the maturity of the ecosystem; Google must continue to build a robust network of partners who can help customers implement and manage these complex systems. This involves providing out-of-the-box governance frameworks and deployment blueprints that make it easier for a wider range of companies to reach production.

Ultimately, the measure of Google’s dominance in the agentic era will be its ability to simplify the immense technical burden that currently accompanies AI deployment. If the company can make building an autonomous business as easy as building a website was in the previous era, it will secure a central role in the global economy for decades to come. This requires a relentless focus on usability, reliability, and security, ensuring that the power of its engineering DNA is accessible to everyone, not just those with massive technical teams. By focusing on the outcome rather than the tool, Google is positioning itself as the indispensable architect of a new kind of enterprise—one that is intelligent, autonomous, and capable of operating at the speed of thought.

The preceding analysis examined the transformation of the cloud landscape into an agentic-first environment through the lens of Google Cloud’s technical and financial strategy. It showed that the traditional reliance on human-led dashboards is giving way to a demand for autonomous decision loops, which require the deep vertical integration and proprietary silicon advantages that Google has cultivated. Financial data and market sentiment indicators confirm that this “engineering-first” approach has translated into significant revenue growth and market share gains, positioning the company as a formidable challenger to the established leaders. As the market matures, the focus is shifting from the mere provision of compute resources to the delivery of verifiable business outcomes driven by the Gemini model family and the Vertex AI platform. Together, these developments indicate that the competitive landscape now rewards providers who can offer a unified, secure, and highly optimized environment for machine-scale execution.

To capitalize on this momentum and navigate the complexities of the agentic era, organizations should focus on the following actionable steps and strategic considerations. First, it is essential to move beyond the “modern data stack” and begin re-architecting for real-time, closed-loop systems that minimize the latency between data acquisition and autonomous action. This requires a rigorous assessment of current data governance and security models to ensure they can support the high-velocity, machine-to-machine interactions typical of AI agents. Second, enterprises should prioritize platforms that offer vertical integration, as the ability to optimize from the silicon to the application layer will be the primary driver of cost-efficiency and performance in the coming years. Finally, leadership teams must shift their evaluation metrics from technical milestones to business outcomes, demanding that AI initiatives demonstrate a clear and measurable impact on operational productivity and market responsiveness. By focusing on these areas, businesses can ensure they are not merely adopting new tools but are fundamentally evolving to thrive in an autonomous future.