Retrieval-augmented generation (RAG) has become the nerve center of enterprise SaaS. The fastest answers now travel through the riskiest routes: proprietary data reached through permissive prompts, where weak retrieval controls are not forgiven. That collision between utility and exposure is forcing a reset of how security boundaries are drawn and enforced. Vendors and adopters are racing to align LLM-powered assistants with the data that actually matters (contracts, tickets, design docs, runbooks), only to discover that the gating function is no longer the app but the retrieval path itself.

Enterprises have already crossed the threshold from model-centric pilots to production systems that fuse search and generation. The winners are seeing double-digit gains in time-to-knowledge, faster resolution in support, higher sales productivity, and stronger developer velocity. Yet the same pipelines have surfaced novel failure modes: indirect prompt injection, knowledge base poisoning, cross-tenant leaks, and vector database exposures. These are not theoretical concerns; they emerged in live deployments, reshaping risk budgets and procurement criteria. The report tracks how markets are converging on a zero-trust stance for retrieval, why vectors now count as regulated data, and how cloud-native controls can anchor safe-by-default RAG.

Industry Overview

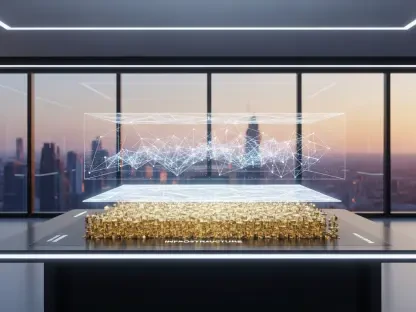

RAG in enterprise SaaS attaches LLMs to the corpus that defines the business: CRM records, ERP exports, intranet pages, source code, and knowledge centers. The architecture typically follows three stages. First, ingestion and embedding transform raw documents into chunked vectors enriched with metadata such as source, tenant, and entitlements. Second, storage and retrieval persist those vectors in a specialized index and return the closest neighbors for a given query. Third, generation and orchestration assemble a prompt with retrieved context and instruct the model to produce an answer grounded in that evidence.
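The ingestion stage described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `Chunk` type, the `chunk_document` helper, and the fixed word-window splitting are all assumptions made for the example, and the embedding step itself is omitted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """One embeddable unit of text plus the metadata later stages filter on."""
    text: str
    source: str                   # originating system or document id
    tenant: str                   # owning customer
    entitlements: frozenset[str]  # groups allowed to read this chunk


def chunk_document(text: str, source: str, tenant: str,
                   entitlements: set[str], max_words: int = 50) -> list[Chunk]:
    """Split text into fixed-size word windows, copying metadata onto each chunk."""
    words = text.split()
    return [
        Chunk(" ".join(words[i:i + max_words]), source, tenant, frozenset(entitlements))
        for i in range(0, len(words), max_words)
    ]
```

The point of the sketch is that tenant and entitlement metadata are attached at chunking time, so every vector derived from a document carries the attributes that retrieval will need to filter on.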

This end-to-end view elevates retrieval from a convenience to a control plane. The model’s output is only as safe as the content presented to it, which means access rights and data hygiene must be enforced before the model ever reasons. Properly applied, this yields the upside enterprises want—faster answers and fewer escalations—while satisfying compliance expectations for minimization, auditable deletion, and policy adherence. The balancing act defines the current state: ambitious adoption tempered by a sharper focus on retrieval-time safeguards.

Market Analysis: Trends, Risks, and Investment Signals

The market is pivoting from prompt-only defenses to defense-in-depth that spans ingestion, retrieval, and generation. Retrieval-time authorization has moved from a best practice to a gating requirement in RFPs, with document- and row-level ABAC or RBAC baked directly into vector queries. Data minimization at ingest—via DLP-driven redaction and pseudonymization—has become normalized, reducing the blast radius if retrieval or generation misfires. Vector stores are now treated like sensitive databases, complete with private networking, encryption, and tightly scoped service accounts.
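To make "authorization baked into the vector query" concrete, the sketch below filters by tenant and entitlement before any similarity scoring. It uses a plain in-memory list in place of a real vector index. The record layout and the `authorized_search` function are hypothetical names chosen for illustration.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def authorized_search(index, query_vec, tenant, user_groups, k=3):
    """Return the top-k records, applying policy before similarity scoring."""
    # Policy pre-filter: only same-tenant records sharing a group with the caller.
    allowed = [
        r for r in index
        if r["tenant"] == tenant and r["entitlements"] & user_groups
    ]
    ranked = sorted(allowed, key=lambda r: cosine(r["vector"], query_vec), reverse=True)
    return ranked[:k]


index = [
    {"id": "a1", "tenant": "acme", "entitlements": {"support"}, "vector": [1.0, 0.0]},
    {"id": "a2", "tenant": "acme", "entitlements": {"legal"}, "vector": [0.9, 0.1]},
    {"id": "g1", "tenant": "globex", "entitlements": {"support"}, "vector": [1.0, 0.0]},
]
hits = authorized_search(index, [1.0, 0.0], tenant="acme", user_groups={"support"})
print([r["id"] for r in hits])  # prints ['a1']
```

Record `g1` is an exact semantic match for the query but is never scored, because it belongs to another tenant. Filtering after ranking would instead risk leaking it or silently shrinking the result set.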

Operational guardrails are maturing as well. Enterprises are separating agent policies from model prompts, scanning inputs and outputs, and attaching provenance signals to retrieval results. Continuous evaluation practices—measuring groundedness, faithfulness, and source alignment—are being operationalized as CI for AI, helping teams detect drift, poisoning, and permission errors before customers do. Cloud providers, vector DB vendors, and security platforms are converging on integrated stacks that simplify posture management for AI workloads, favoring cloud-native controls over bolt-on tools.

Adoption data shows concentration in customer support assistants, developer copilots, sales enablement, ops automation, and analytics Q&A. Deployments typically blend proprietary sources with vetted public content, but the balance is tilting toward private knowledge as poisoning risks in the open web rise. Incidents logged since 2024 clustered around vector layer misconfigurations, indirect injection through retrieved text, cross-tenant contamination, and “zero-click” exfiltration paths. Key KPIs now include retrieval precision and recall, groundedness scores, leak and policy-block rates, and mean time to remediation. Investment is flowing toward retrieval authorization, provenance scoring, and evaluation platforms, with benchmarks emerging for entitlement coverage in indices, deletion SLAs across derived vectors, and encrypted-at-rest footprints.
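Of the KPIs listed, retrieval precision and recall have standard definitions and are straightforward to compute once a labeled set of relevant documents exists. The sketch below assumes retrieved ids are unique and that relevance labels come from a human-curated evaluation set. The function name is illustrative.

```python
def precision_recall(retrieved: list[str], relevant: set[str]) -> tuple[float, float]:
    """Precision = hits / retrieved, recall = hits / relevant, for one query."""
    hits = sum(1 for doc_id in retrieved if doc_id in relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


p, r = precision_recall(["d1", "d2", "d3", "d4"], {"d1", "d3", "d9"})
print(p)  # prints 0.5
```

Here two of the four retrieved documents are relevant (precision 0.5) and two of the three relevant documents were found (recall of two thirds).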

Threat Model and Safeguards

RAG expands the attack surface because model outputs hinge on dynamic, sometimes untrusted content. Indirect injection rides along with retrieved context: malicious instructions buried in PDFs, tickets, or README files can hijack agent behavior. Knowledge base poisoning plants biased or false content upstream, which RAG then amplifies with borrowed credibility. The vector layer adds its own exposure: embeddings derived from sensitive text are themselves sensitive, and embedding inversion attacks can recover approximations of the original text from stored vectors. In multi-tenant SaaS, weak isolation or misapplied filters can surface another customer’s data whenever semantic neighbors are returned without policy filtering.

Practical safeguards follow the pipeline. Pre-embedding DLP and sanitization strip PII and secrets, tag records with tenant and entitlement metadata, and establish deletion lineage. Storage controls encrypt vectors, lock down management paths, and enforce least privilege. Retrieval-time ABAC or RBAC operates inside the query, filtering candidates before ranking so the model never sees out-of-policy chunks. Prompt isolation separates system rules, user intent, and context, while input classifiers look for jailbreak and injection motifs. Output scanning catches PII or sensitive disclosures and logs incidents for response workflows. Telemetry that tracks retrieval provenance, role or tenant mismatches, token spikes, and semantic drift feeds continuous evaluation to detect poisoning and misconfiguration.
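As a rough illustration of the pre-embedding sanitization step, the sketch below replaces a few sensitive patterns with typed placeholders and counts the replacements. The regular expressions are deliberately simple and are assumptions for this example (the `sk_` key format in particular is made up). A production pipeline would rely on a dedicated DLP service rather than hand-written patterns.

```python
import re

# Illustrative patterns only; real detectors are far more thorough.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "API_KEY": re.compile(r"\bsk_[A-Za-z0-9]{16,}\b"),  # hypothetical key format
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a typed placeholder and report counts per type."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


clean, stats = redact("Mail jo@example.com, SSN 123-45-6789")
print(clean)  # prints Mail [EMAIL], SSN [US_SSN]
```

Returning the per-type counts alongside the cleaned text gives the pipeline a redaction-rate signal it can log before the text is chunked and embedded.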

Compliance and Governance

Regulations governing privacy, security, and sectoral obligations now directly shape RAG implementation. GDPR and CCPA drive minimization, right-to-be-forgotten, and purpose limitation, pushing teams to engineer deletion and auditability into chunking and embedding. HIPAA and PCI DSS impose strict handling of PHI and card data, which in turn requires redaction or exclusion policies at ingest. SOC 2, ISO 27001, ISO/IEC 23894, and the NIST AI RMF provide frameworks to map control objectives to RAG stages, clarifying how policies translate to technical enforcement.

Meeting these obligations hinges on metadata discipline. Entitlements, tenants, source systems, and lineage must be indexable attributes so that purges can cascade across derived artifacts. Data residency, encryption, and key management policies must extend to vector stores and model prompts. Vendor risk expands to include vector DBs, LLM APIs, and guardrail services, underscoring the shared responsibility model. Evidence of compliance is getting more granular: redaction rates, retrieval authorization test suites, groundedness reports, and policy enforcement logs are now standard artifacts in audits.
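The idea that purges must cascade across derived artifacts can be sketched as a deletion keyed on lineage metadata. The example assumes each stored chunk records the `tenant` and `source_id` it was derived from. The dictionary-backed store and the `purge_source` name are stand-ins for whatever vector database and API a team actually uses.

```python
def purge_source(vector_store: dict[str, dict], tenant: str, source_id: str) -> list[str]:
    """Delete every chunk derived from one source document; return the ids removed."""
    doomed = [
        chunk_id for chunk_id, rec in vector_store.items()
        if rec["tenant"] == tenant and rec["source_id"] == source_id
    ]
    for chunk_id in doomed:
        del vector_store[chunk_id]
    return doomed  # suitable for writing to an audit log


store = {
    "c1": {"tenant": "acme", "source_id": "ticket-42"},
    "c2": {"tenant": "acme", "source_id": "ticket-42"},
    "c3": {"tenant": "acme", "source_id": "ticket-43"},
    "c4": {"tenant": "globex", "source_id": "ticket-42"},
}
removed = purge_source(store, "acme", "ticket-42")
print(removed)  # prints ['c1', 'c2']
```

Without the `source_id` attribute on every chunk, the same request would require re-deriving which vectors came from the deleted document, which is exactly the retrofit the text warns against.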

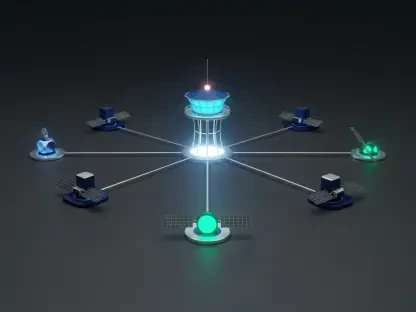

Platform Mapping: Google Cloud Controls

Enterprises building on Google Cloud are aligning controls with the RAG lifecycle. Sensitive Data Protection detects and redacts PII, secrets, and regulated fields before chunking, lowering exposure in embeddings. Vertex AI Vector Search pairs with IAM to enforce retrieval-time authorization and strong tenant isolation, embedding entitlements directly into query filters. Vertex AI Model Armor screens inputs for jailbreaks and indirect injection patterns and scans outputs for sensitive leakage or unsafe content.

Quality and safety are monitored through Vertex AI Evaluation, which tracks groundedness, faithfulness, and source alignment as part of continuous delivery. Security Command Center Enterprise, with AI Security Posture Management, inventories AI assets, flags misconfigurations such as overly permissive roles or exposed vector endpoints, and models exfiltration paths. Together these services streamline a zero-trust approach while providing audit-friendly telemetry.

Forecast and Strategic Guidance

From 2026 to 2028, spending is expected to tilt decisively toward retrieval authorization, provenance-aware ranking, and evaluation platforms that quantify safety. Attribute-aware vector search and hybrid retrieval that pre-filter by policy before similarity scoring will become table stakes. Signed provenance and trust scoring will influence ranking, and confidential computing with encrypted search will harden embeddings and queries. Policy LLMs and verifiable agents will mediate tool use and retrieval permissions, reducing dependence on brittle prompt logic.
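One simple way trust scoring could influence ranking is to multiply each candidate's similarity by a per-source trust weight. The multiplicative form, the weights, and the `provenance_rank` name below are assumptions for illustration. The report does not specify a scoring formula.

```python
def provenance_rank(candidates, trust_by_source, default_trust=0.1):
    """Order candidates by similarity scaled by the trust weight of their source."""
    def score(c):
        # Unknown sources fall back to a low default trust.
        return c["similarity"] * trust_by_source.get(c["source"], default_trust)
    return sorted(candidates, key=score, reverse=True)


candidates = [
    {"id": "web", "source": "public_web", "similarity": 0.90},
    {"id": "wiki", "source": "internal_wiki", "similarity": 0.80},
]
trust = {"internal_wiki": 1.0, "public_web": 0.5}
print([c["id"] for c in provenance_rank(candidates, trust)])  # prints ['wiki', 'web']
```

The public page is the closer semantic match, but the vetted internal source outranks it once trust is applied (0.80 versus 0.45).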

Enterprises favor integrated, cloud-native security over patchwork point tools. Buyers are asking for measurable SLAs such as leak rate, cross-tenant incident rate, and deletion SLA coverage. Strategic guidance is coalescing around early metadata design—encoding tenant and entitlements at ingest, not as retrofits—plus automated red-team injections, poisoning drills, and fuzzing of retrieval authorization. Freshness will be balanced with trust by gating ingestion, using allowlists, and applying provenance-weighted retrieval for external sources.
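Fuzzing retrieval authorization can be as simple as throwing randomized identities at the retriever and asserting that nothing out of policy comes back. The sketch below is a toy harness under stated assumptions: `retrieve` stands in for the system under test, and the record fields and function names are hypothetical.

```python
import random


def retrieve(index, tenant, groups):
    """Toy retriever under test: policy filter only, no ranking."""
    return [r for r in index if r["tenant"] == tenant and r["groups"] & groups]


def fuzz_authorization(retriever, index, tenants, groups, trials=500, seed=0):
    """Run random identities through the retriever and collect policy violations."""
    rng = random.Random(seed)  # seeded so failures are reproducible
    violations = []
    for _ in range(trials):
        tenant = rng.choice(tenants)
        user_groups = set(rng.sample(groups, rng.randint(1, len(groups))))
        for rec in retriever(index, tenant, user_groups):
            if rec["tenant"] != tenant or not (rec["groups"] & user_groups):
                violations.append((tenant, sorted(user_groups), rec["id"]))
    return violations


index = [
    {"id": "a1", "tenant": "acme", "groups": {"support"}},
    {"id": "a2", "tenant": "acme", "groups": {"legal"}},
    {"id": "g1", "tenant": "globex", "groups": {"support"}},
]
tenants = ["acme", "globex"]
groups = ["support", "legal", "sales"]
assert fuzz_authorization(retrieve, index, tenants, groups) == []
```

Run in CI against the real retrieval path, a non-empty violation list becomes a failing build rather than a customer-facing leak.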

Verdict and Next Steps

The evidence points to a clear answer: RAG can be made safe for SaaS when retrieval-time controls anchor a defense-in-depth architecture across ingestion, storage, and generation. Organizations that enforce entitlements in the vector query, minimize sensitive content before embedding, encrypt and isolate vector infrastructure, and separate prompts while screening inputs and outputs see material reductions in leak rates and cross-tenant incidents. Continuous evaluation and posture management complete the loop, catching drift, poisoning, and misconfigurations before they reach customers.

Next steps translate into concrete moves. Teams should prioritize retrieval authorization, metadata pipelines that preserve provenance and deletion lineage, and evaluation plus telemetry that measure groundedness and policy adherence. Posture automation (scanning for misconfigurations, exposed endpoints, and weak roles) reduces operational drag. Emerging capabilities, including attribute-aware search, signed provenance, and confidential retrieval, offer fresh levers to harden pipelines without sacrificing utility. The market’s direction favors cloud-native stacks capable of proving safety with evidence, not assurances. The path to trusted RAG in SaaS runs through zero-trust principles applied at retrieval, with Google Cloud’s Sensitive Data Protection, Vertex AI Vector Search, Model Armor, Evaluation, and Security Command Center serving as practical building blocks.