The financial sector operates within a digital ecosystem where a single millisecond of latency or a minor logic error in a smart contract can trigger millions of dollars in losses across global markets. As institutions navigate this high-stakes environment, traditional methods of verifying software integrity are proving inadequate for the speed and scale of modern finance. The industry is in the middle of a major transition in which artificial intelligence is replacing the deterministic, human-authored scripts of the past with adaptive systems capable of self-directed validation. This movement toward a Quality Intelligence model is not merely a matter of convenience; it is a necessary structural response to the complexity of distributed financial networks that now process billions of transactions daily. By using machine learning to analyze application behavior and predict potential failure points, these systems help keep the digital foundations of the global economy resilient under the intense operational pressures that define the contemporary market.

The Failure of Deterministic Logic: Moving Beyond Manual Scripts

For decades, the bedrock of software reliability was the deterministic test script, a rigid framework where engineers manually anticipated specific user paths and wrote code to check other code. This methodology operated under the assumption that an application existed in a relatively static state with a manageable number of predictable outcomes. However, the current era of cloud-native architectures and microservices has rendered this static model entirely obsolete. In a modern fintech environment, applications are subject to continuous integration and deployment cycles where code changes are pushed to production several times an hour. This rapid pace has created a maintenance trap for quality assurance teams, who frequently find themselves spending more time repairing broken test scripts than actually investigating potential vulnerabilities or developing new features for the platform.

The inherent limitation of human-authored scripts is that they are restricted by the imagination and foresight of the individual tester. Conventional automation can only verify the specific conditions that a human has thought to define, leaving vast gaps in coverage around complex system interactions and rare edge cases. As financial platforms grow more interconnected, the number of possible system states multiplies with every added service, configuration option, and integration, making it impractical for a human team to script every potential scenario. This lack of coverage creates a dangerous illusion of security in which a dashboard might show passing tests while a critical logic flaw remains hidden in an unmapped intersection of APIs and third-party services. The transition to AI-driven validation addresses this by moving away from pre-defined paths toward a system that explores the application dynamically, identifying patterns and anomalies that a human team is unlikely to anticipate.
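
To make the growth in possible system states concrete, the following is a minimal sketch that counts the combinations a team would need to script if a handful of test dimensions varied independently. The dimension names and option counts in `DIMENSIONS` are illustrative assumptions, not figures from any real platform.

```python
from math import prod

# Hypothetical test dimensions for a payment flow; the names and
# option counts are illustrative assumptions, not measured values.
DIMENSIONS = {
    "currency": 30,
    "payment_method": 6,
    "account_status": 5,
    "fraud_flag": 3,
    "promo_state": 4,
}


def scenario_count(options_per_dimension):
    """Number of distinct combinations if every dimension varies independently."""
    return prod(options_per_dimension)


baseline = scenario_count(DIMENSIONS.values())  # 30 * 6 * 5 * 3 * 4 = 10800

# Integrating one more third-party service with 10 possible response
# states multiplies the space rather than adding to it.
with_new_service = scenario_count([*DIMENSIONS.values(), 10])  # 108000

print(baseline, with_new_service)
```

Each new integration multiplies the total, which is why hand-written scripts tend to cover a shrinking fraction of the space as a platform grows.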

Navigating the Complexity: Why Fintech Requires Specialized Intelligence

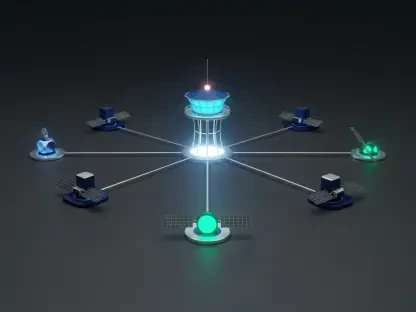

Software defects are problematic in any industry, but in the financial technology sector, the consequences of a failure are often catastrophic and irreversible. The cost of a bug in a payment gateway or a trading platform is not measured in minor user frustration but in systemic financial loss, regulatory penalties, and the permanent destruction of institutional trust. Financial platforms are defined by their intricate, multi-layered workflows where a single transaction triggers a cascade of events including fraud detection, currency conversion, interest calculations, and automated regulatory reporting. Each of these layers operates on its own logic, and the interactions between them frequently create emergent behaviors that are impossible to predict using simple pass-fail scripts. Traditional testing methodologies struggle to capture these multi-variable conditions, whereas artificial intelligence thrives on analyzing the high-dimensional data sets typical of these intricate transactions.

This complexity is further magnified by the global nature of modern finance, which requires software to handle diverse regulatory requirements and fluctuating market conditions in real-time. A bug might only manifest when a specific promotional offer expires exactly during a peak transaction window while a user has a pending dispute in a different currency. Capturing such a specific set of circumstances requires a level of data synthesis that far exceeds human capacity. Artificial intelligence models are specifically designed to ingest vast amounts of telemetry and historical data to identify these hidden correlations. By recognizing that certain modules are more prone to failure under specific environmental stressors, these intelligent systems can direct testing resources toward the highest-risk areas of the platform, ensuring that the most critical financial functions receive the highest level of scrutiny.
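
One simple way to picture this kind of risk-directed testing is a score that combines how often a module has failed with how critical it is to the business. The sketch below is an assumption-laden illustration rather than a description of any particular product: `ModuleStats`, the `criticality` weight, and the smoothed failure rate are all hypothetical choices, and a production system would likely learn its weights from telemetry instead of fixing them by hand.

```python
from dataclasses import dataclass


@dataclass
class ModuleStats:
    name: str
    runs: int           # historical test executions touching this module
    failures: int       # how many of those executions failed
    criticality: float  # 0..1 business weight (assumed to be assigned by hand)


def risk_score(module: ModuleStats) -> float:
    """Smoothed historical failure rate, weighted by business criticality."""
    # Add-one smoothing keeps modules with very few runs from scoring 0 or 1.
    failure_rate = (module.failures + 1) / (module.runs + 2)
    return failure_rate * module.criticality


def prioritize(modules):
    """Return modules ordered from highest to lowest risk score."""
    return sorted(modules, key=risk_score, reverse=True)


modules = [
    ModuleStats("payments", runs=1000, failures=50, criticality=1.0),
    ModuleStats("reporting", runs=1000, failures=100, criticality=0.3),
    ModuleStats("fx_conversion", runs=200, failures=30, criticality=0.8),
]

for m in prioritize(modules):
    print(f"{m.name}: {risk_score(m):.3f}")
```

In this toy data the reporting module fails most often in absolute terms, yet it ranks last because its assumed business weight is low, while the less-exercised currency conversion module rises to the top.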

Integrating Intelligence: The Rise of the Proactive Quality Layer

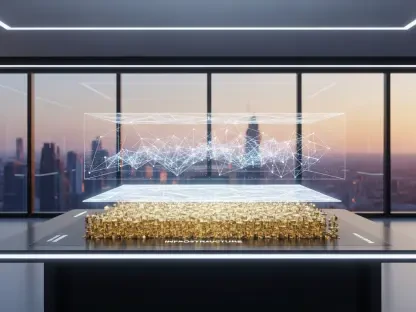

The core of the current technological transformation is the transition from passive tools to proactive, intelligent systems that observe the entire software ecosystem. Unlike traditional automation frameworks that wait for a command to execute a script, AI-driven quality layers function as a continuous intelligence stream. These systems ingest data from a variety of sources, including historical test results, application logs, and real-time user telemetry, to build a comprehensive map of system health. By analyzing how actual customers navigate a platform, the AI can identify discrepancies between the intended design and real-world usage, ensuring that the most frequently used paths are the most rigorously validated. This approach allows organizations to move away from the inefficient practice of testing everything and instead focus on testing what matters most to the business and its users.
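
The idea of comparing real-world usage against what is actually validated can be sketched as a small coverage-gap report. The example assumes telemetry has already been reduced to ordered lists of step names per session and that the set of paths covered by existing tests is known; both inputs, along with the function name `untested_paths_by_traffic`, are hypothetical.

```python
from collections import Counter


def untested_paths_by_traffic(sessions, tested_paths):
    """List observed user paths with no test, most-travelled first.

    sessions:     iterable of step-name sequences taken from telemetry
    tested_paths: set of step-name tuples that existing tests already cover
    Returns a list of (path, share_of_traffic) tuples.
    """
    counts = Counter(tuple(steps) for steps in sessions)
    total = sum(counts.values())
    return [
        (path, seen / total)
        for path, seen in counts.most_common()
        if path not in tested_paths
    ]


# Illustrative telemetry: six sessions across three distinct paths.
sessions = (
    [["login", "transfer", "confirm"]] * 3
    + [["login", "dispute"]] * 2
    + [["login", "balance"]]
)
tested = {("login", "transfer", "confirm")}

for path, share in untested_paths_by_traffic(sessions, tested):
    print(" > ".join(path), f"{share:.0%}")
```

A report like this points testing effort at the paths customers actually take, rather than at whichever paths happened to be scripted first.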

Generative AI is playing a particularly vital role in this evolution by bridging the technical gap between business requirements and executable code. Large Language Models are now capable of taking a natural language description of a financial workflow and automatically generating hundreds of permutations and edge-case scenarios. For instance, a product manager can describe a new credit card dispute process, and the AI can instantly produce test cases covering partial payments, fluctuating exchange rates, and mid-cycle resolution attempts. This rapid automation ensures a level of rigor and coverage that would take a human team weeks to design and implement manually. By automating the creation of these scenarios, fintech companies can maintain their pace of innovation without sacrificing the stability required for financial operations, essentially allowing the testing process to scale at the same speed as the development itself.
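
The sketch below illustrates only the expansion step: how a few dimensions of the credit card dispute example fan out into concrete scenarios. It uses plain enumeration with `itertools.product` as a stand-in, not a language model, and the dimension names and values are assumptions chosen to mirror the example above. In a generative workflow the model would propose the dimensions and values from the natural language description, and could add scenarios that a fixed grid would miss.

```python
from itertools import product

# Hypothetical dimensions for a credit card dispute workflow.
DISPUTE_DIMENSIONS = {
    "payment": ["full", "partial"],
    "fx_movement": ["stable", "rate_up", "rate_down"],
    "resolution_timing": ["before_cycle_close", "mid_cycle", "after_cycle_close"],
}


def generate_scenarios(dimensions):
    """Expand a mapping of dimension -> possible values into scenario dicts."""
    names = list(dimensions)
    return [dict(zip(names, combo)) for combo in product(*dimensions.values())]


scenarios = generate_scenarios(DISPUTE_DIMENSIONS)
print(len(scenarios))  # 2 * 3 * 3 = 18
print(scenarios[0])
```

Each resulting dictionary can then be fed to a parameterized test, so adding one new value to a dimension extends coverage without anyone writing a new script by hand.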

Predictive Validation: Shifting Quality Control to the Design Phase

Much of the industry is moving toward predictive testing, a methodology that uses historical data and machine learning to forecast where defects are likely to occur before a single test is executed. In the high-pressure fintech space, this capability allows developers to identify high-risk changes that resemble previous updates which caused systemic failures. By analyzing code commits and comparing them against past regression patterns, the AI can flag potentially dangerous modifications at the earliest stages of development, well before release. This shift-left approach to quality control is essential for keeping bugs out of the production environment, where they could cause immediate financial disruption or erode customer confidence in the platform’s reliability. Predictive models act as an early warning system, providing developers with actionable insights while the code is still being written.
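
As a deliberately simple stand-in for a trained model, the comparison of a new commit against past regressions can be sketched with Jaccard similarity over the sets of files each change touched. The file paths, the `regression_risk` function, and the 0.5 threshold are all hypothetical; a real predictive system would typically draw on far richer features such as authorship, churn, and dependency graphs.

```python
def jaccard(a, b):
    """Overlap between two collections of file paths, from 0.0 to 1.0."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def regression_risk(changed_files, past_regressions):
    """Highest similarity between this change and any past regression-causing change."""
    return max(
        (jaccard(changed_files, past) for past in past_regressions),
        default=0.0,
    )


# Illustrative history: file sets touched by commits that later caused regressions.
past_regressions = [
    {"ledger/post.py", "fx/convert.py"},
    {"ui/theme.css"},
]

new_commit = {"ledger/post.py", "fx/convert.py", "fx/rates.py"}
risk = regression_risk(new_commit, past_regressions)

RISK_THRESHOLD = 0.5  # assumed cut-off for requesting extra review
print(f"risk={risk:.2f}", "flag for review" if risk >= RISK_THRESHOLD else "ok")
```

Because the score is available as soon as the changed files are known, a check like this can run before any test suite starts.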

We are currently witnessing the rise of truly autonomous quality engineering, a state where the system manages its own lifecycle with minimal human intervention. In this model, self-healing scripts are capable of automatically updating themselves when a user interface changes or an API is modified, eliminating the maintenance burden that has traditionally slowed down development teams. These autonomous systems are also adept at distinguishing between genuine system failures and environmental noise, such as network latency or temporary database timeouts. By filtering out these “flaky” results, the AI ensures that engineers only spend their time investigating real issues that impact the integrity of the financial system. This streamlining of the development pipeline allows for a more focused and efficient allocation of human resources, moving the focus from routine execution to high-level system architecture and security.
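
A common heuristic for separating genuine failures from environmental noise is to rerun a test against the same code revision: if the outcomes disagree, the code did not change, so the test is treated as flaky. The sketch below applies that rule to a list of `(test, revision, passed)` records. The record layout and labels are assumptions for illustration, and an AI-driven system would likely combine this signal with others such as timing and infrastructure logs.

```python
from collections import defaultdict


def classify_results(results):
    """Label each (test, revision) pair as 'pass', 'fail', or 'flaky'.

    results: iterable of (test_name, revision, passed) tuples, where the
    same test may appear several times for one revision because of reruns.
    """
    outcomes = defaultdict(set)
    for test, revision, passed in results:
        outcomes[(test, revision)].add(bool(passed))

    labels = {}
    for key, seen in outcomes.items():
        if seen == {True}:
            labels[key] = "pass"
        elif seen == {False}:
            labels[key] = "fail"   # failed every time: likely a real defect
        else:
            labels[key] = "flaky"  # mixed outcomes on identical code
    return labels


# Illustrative run history for a single revision.
results = [
    ("test_settlement_total", "abc123", False),
    ("test_settlement_total", "abc123", False),
    ("test_fx_quote_timeout", "abc123", False),
    ("test_fx_quote_timeout", "abc123", True),
    ("test_login", "abc123", True),
]

for (test, revision), label in classify_results(results).items():
    print(revision, test, label)
```

Only the results labelled `fail` would be routed to an engineer, while `flaky` results can be quarantined and tracked separately.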

Redefining Expertise: The Strategist in the Autonomous Ecosystem

As artificial intelligence assumes responsibility for repetitive and data-heavy validation tasks, the role of the human quality engineer is being fundamentally redefined. Rather than spending their days writing and debugging scripts, these professionals are evolving into high-level strategists who define the intent of the system and interpret the deep insights provided by machine learning models. The human element remains critical for navigating challenges that machines cannot yet master, such as the ethical implications of AI-driven decision-making and the complex interpretation of global financial regulations. This transition allows human experts to apply their intelligence to more creative and impactful problems, such as optimizing the user experience strategy or designing more robust security architectures that can withstand increasingly sophisticated cyber threats in the financial sector.

The integration of artificial intelligence into software testing is providing a new and more robust foundation for trust in the digital financial world. By moving from a linear, reactive process to a continuous loop of data analysis and risk prediction, organizations are aligning their quality assurance efforts with the realities of modern finance. This transformation is not merely a technical upgrade but a vital survival mechanism for institutions operating in a hyper-connected global economy. Leaders in the sector have already begun implementing multi-year roadmaps to expand these capabilities, so that as financial systems grow more complex, the ability to verify their accuracy and safety keeps pace. The industry is moving beyond the limitations of manual scripting, toward a future where software reliability is a dynamic, intelligent, and proactive component of the financial infrastructure.