Computational power has become a strategic commodity, and a $1.8 billion commitment from Anthropic shows that this is no longer a theoretical debate. As frontier AI models grow in complexity, the underlying infrastructure must change to keep pace with the volume of data processing required. The artificial intelligence sector now depends on high-density environments that can support massive clusters of specialized hardware. This has sparked a transition from the general-purpose cloud services that served the broader internet for decades toward environments tailored to large-scale model training and inference.

While traditional hyperscalers still maintain a formidable presence, agile networking firms like Akamai are positioning themselves as viable alternatives. The scramble for high-end GPU hardware is happening against a backdrop of power-constrained data centers, where securing electricity is just as critical as securing the silicon itself. The ability to provide massive, reliable compute at scale now determines which infrastructure providers will thrive.

The Evolving Landscape of Frontier AI and Specialized Compute Demand

The artificial intelligence sector has reached a point where the standard cloud delivery model is no longer sufficient for the needs of frontier research. High-density infrastructure is now a prerequisite for any firm attempting to build or run state-of-the-art models, leading to a surge in demand for facilities that can handle extreme heat and power loads. This shift marks a clear departure from the flexible, general-purpose virtual machines of the past, moving instead toward physical environments that are custom-built for high-performance computing.

In this tightening market, the hierarchy of players is changing as specialized networking and edge computing companies enter the fray. The global scramble for NVIDIA architectures has created a bottleneck that forces AI firms to look beyond the big three cloud providers. Consequently, firms are prioritizing data centers that offer not just space, but the specific power and cooling capacities required to keep thousands of GPUs running at peak performance without interruption.

Redefining the Cloud Paradigm through Strategic Alliances

The Shift Toward Multi-Provider and Alternative Cloud Ecosystems

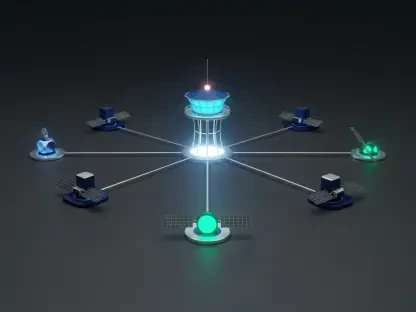

Frontier firms are increasingly diversifying their infrastructure to mitigate the risks associated with being tethered to a single cloud giant. This strategic pivot has allowed second-tier cloud providers to rise, transforming from traditional content delivery networks into high-scale AI compute hubs. By spreading their workloads across multiple environments, AI developers can ensure that a single provider’s hardware shortage or service outage does not paralyze their entire development pipeline.

This diversification is driven by a surge in utilization, with some AI services reporting 80x growth in demand over short periods. Scaling that quickly requires a form of infrastructure insurance that a decentralized, bespoke hardware arrangement is well placed to provide. As a result, the industry is moving toward a more fragmented yet resilient ecosystem in which hardware is localized and optimized for specific tasks rather than centralized in a few massive hubs.

Analyzing Economic Momentum and Scaling Projections

The $1.8 billion commitment from Anthropic indicates how highly the market now values high-performance infrastructure. Capital on this scale gives alternative providers the momentum to compete directly with established giants in the high-performance computing market. Market data suggests that long-term contracts of this type are becoming a standard way to secure capacity through the end of 2028, reflecting a belief that demand for compute will continue to accelerate.

Forward-looking forecasts indicate that GPU capacity requirements will reach unprecedented levels as training and inference workloads scale up. The infrastructure market is projected to grow significantly as firms rush to fulfill the requirements of the next generation of models. For alternative cloud providers, this represents a unique window to capture market share by offering the specialized, high-performance clusters that traditional providers are struggling to supply fast enough.

Navigating the High-Stakes Complexity of GPU Scaling

Building and maintaining clusters consisting of tens of thousands of GPUs involves staggering capital expenditure that tests the balance sheets of even the wealthiest firms. Beyond the initial purchase of hardware, the technical obstacles of retrofitting older data centers are immense, as advanced architectures require specialized liquid cooling and significantly more power than standard racks. These requirements create a high barrier to entry, ensuring that only those with deep pockets and technical expertise can compete.

Revenue concentration is another critical risk, as infrastructure providers become increasingly dependent on a small number of massive AI clients. Hardware procurement cycles also mean that firms must place multibillion-dollar bets on technology that may be superseded within a few years. Overcoming these bottlenecks requires a blend of financial engineering and technical innovation so that capacity can keep scaling during periods of rapid user adoption.

The Regulatory and Operational Framework of Modern AI Infrastructure

The regulatory environment surrounding high-density data centers is becoming increasingly complex as governments scrutinize energy consumption and international hardware trade. Standards for data security and compliance are evolving to protect proprietary frontier models within shared hosting environments, where the risk of intellectual property theft carries high stakes. These legal frameworks are designed to balance the need for innovation against the protection of national interests and environmental resources.

Moreover, there is a growing push for regulations that prevent vendor lock-in, encouraging interoperability across various cloud stacks. This regulatory pressure aligns with the strategic goals of AI firms that wish to remain flexible in their infrastructure choices. Simultaneously, shifting energy laws are influencing where new facilities are built, favoring regions that can provide stable, green energy to power the massive cooling systems required by modern AI hardware.

Future Trajectories for the AI-Specialized Data Center

The next generation of data center design will likely focus on liquid cooling and dedicated AI networking to handle the massive data throughput required by modern models. Market disruptors are also looking toward edge AI and specialized hardware accelerators to reduce the reliance on central GPU clusters. These innovations could potentially decentralize the power of the current cloud giants, allowing for more localized and efficient processing of AI tasks.

Global economic conditions will continue to influence the feasibility of long-duration, multibillion-dollar infrastructure contracts. While the current trend favors massive GPU fleets, future gains in model efficiency could reshape long-term demand for hardware on this scale. If researchers find ways to achieve similar performance with less compute, the infrastructure landscape may shift again, favoring agility and efficiency over raw, brute-force power.

Assessing the Long-Term Viability of the Anthropic-Akamai Blueprint

The strategic partnership between Anthropic and Akamai reflects a shift in how frontier AI companies secure the computational resources they need for growth. By committing billions of dollars over a multi-year period, the two organizations have established a blueprint for infrastructure diversification that challenges the traditional dominance of hyperscale cloud providers. The arrangement provides a hedge against hardware scarcity and keeps scaling a priority even amid intense market competition.

The deal offers a potentially repeatable model for the broader industry, suggesting that alternative providers can pivot their business models to meet the specialized demands of AI. For stakeholders working at the intersection of cloud networking and artificial intelligence, decentralized, bespoke arrangements offer greater flexibility and risk mitigation. This evolution suggests that the future of AI infrastructure will be defined by specialized partnerships rather than reliance on a single, monolithic provider.