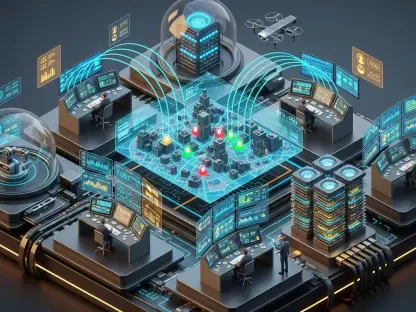

The rapid convergence of computer vision and cognitive reasoning is transforming how digital ecosystems operate by shifting the paradigm from static software tools toward active, autonomous participants. This evolution marks a decisive departure from traditional Large Language Models that merely process text, ushering in the era of Large Action Models capable of navigating complex user interfaces. Anthropic has signaled its commitment to this frontier through the strategic acquisition of Vercept, a specialized team dedicated to bridging the gap between digital perception and executable action. By integrating this expertise, Anthropic aims to refine Claude into a system that does not just suggest work but performs it within the native environments of professional software.

Mapping the Evolution of Agentic AI and Vision-Based Automation

The current landscape of artificial intelligence is moving beyond the chat interface and into the realm of direct operating system interaction. This transition represents the next major frontier for productivity software, where the ability to use a computer like a human becomes the primary metric of utility. While early automation relied on Robotic Process Automation, which required rigid scripts and predefined paths, the new wave of agentic systems utilizes flexible, vision-driven logic. This shift allows AI to adapt to updates in software layouts without breaking, a capability that was previously impossible under the old RPA framework.

The competitive race between industry leaders like Anthropic, OpenAI, and Google DeepMind has intensified as they each seek to build the most reliable digital assistant. Success in this sector depends on how well a model can interpret a screen in real time and translate that visual data into precise mouse movements and keystrokes. As organizations move away from brittle API dependencies, the demand for models that can interact with any legacy or modern application through visual intelligence has become a central focus of market growth.

Catalyzing Growth Through Advanced Perception and High-Performance Benchmarks

The Strategic Transition: From Static Scripting to Dynamic Visual Intelligence

Vercept’s core innovation lies in its Vy technology, a system designed to perceive digital interfaces with the same nuance and contextual awareness as a human user. Unlike traditional tools that look for specific code tags or metadata, this technology interprets the actual pixels on a screen to identify buttons, fields, and navigation menus. This visual-first approach allows for more natural, language-based command execution: a user provides a high-level instruction, and the AI determines the steps needed to complete it across multiple applications.
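To make the idea of visual grounding concrete, the sketch below shows one way a recognized on-screen element could be turned into a click target. It is an illustration only, not Vercept's or Anthropic's implementation: the `UIElement` type, the `find_element` and `click_point` helpers, and the sample bounding boxes are all hypothetical, and the sketch assumes a vision model has already produced labeled boxes from the screenshot.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class UIElement:
    """Hypothetical record for one element a vision model found on screen."""
    label: str                       # text read off the screen, e.g. "Save"
    role: str                        # "button", "field", or "menu"
    box: Tuple[int, int, int, int]   # left, top, right, bottom in pixels


def find_element(elements: List[UIElement], label: str, role: str) -> Optional[UIElement]:
    """Return the first element whose role and label match (case-insensitive)."""
    wanted = label.strip().lower()
    for element in elements:
        if element.role == role and element.label.strip().lower() == wanted:
            return element
    return None


def click_point(element: UIElement) -> Tuple[int, int]:
    """Return the center of the element's bounding box as a click target."""
    left, top, right, bottom = element.box
    return ((left + right) // 2, (top + bottom) // 2)


# Example screen contents; the values are invented for illustration.
screen = [
    UIElement("File", "menu", (0, 0, 40, 20)),
    UIElement("Save", "button", (100, 200, 180, 240)),
]

save_button = find_element(screen, "save", "button")
if save_button is not None:
    print(click_point(save_button))
```

Because the lookup works from what is visible rather than from code tags, the same step keeps working if the button moves, as long as the vision stage still reports it with the same label and role.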

The leadership team brought into Anthropic from Vercept carries deep expertise in robotics and perception, which is vital for grounding Claude’s reasoning in digital spaces that behave much like physical ones. This influx of talent should help the model understand spatial relationships on a desktop, such as the difference between a background window and an active dialog box. This foundational knowledge is expected to accelerate the development of more intuitive agentic workflows that feel seamless to the end user.

Quantifying the Leap: Efficiency and Performance Metrics

The technical advantage of vision-based systems is reflected in recent automation benchmarks, where Vercept reportedly achieved a 92% accuracy rate. This performance stands in sharp contrast to earlier industry results that often struggled to reach even 20% on complex, multi-step tasks. For enterprises, these gains translate into an estimated 30% reduction in operational costs, as the need for manual oversight and script maintenance shrinks.

Moreover, a reported fivefold increase in execution speed allows for high-velocity data processing and workflow execution that outpaces human capability. Market projections suggest that agentic systems capable of complete workflow autonomy will see rapid adoption as companies seek to maximize the efficiency of their existing software stacks. The ability to handle intricate spreadsheets and web-based environments with human-level precision is no longer a theoretical goal but a measurable reality.

Overcoming the Technical Hurdles of Real-Time Interface Interaction

Operating within a live digital environment presents significant challenges, particularly regarding the latency of screen processing and the unpredictability of dynamic web elements. AI agents must be able to process continuous visual streams while making split-second decisions, a task that requires immense computational overhead. Navigating non-standardized layouts and legacy software requires a level of reasoning that can distinguish between a deliberate UI change and a temporary loading error.
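One simple way to separate a transient loading state from a settled interface is to wait until consecutive observations of the screen agree. The sketch below assumes a hypothetical `observe` callback that returns a comparable summary of the screen (for example, a hash of the screenshot); the function name and thresholds are illustrative, and a real agent would also pause between polls.

```python
from typing import Callable, Hashable, Optional


def wait_until_stable(
    observe: Callable[[], Hashable],
    required_repeats: int = 3,
    max_polls: int = 20,
) -> Optional[Hashable]:
    """Poll observe() until the same value appears required_repeats times in a row.

    Returns the stable value, or None if the screen never settles
    within max_polls observations.
    """
    last = None
    streak = 0
    for _ in range(max_polls):
        current = observe()
        if streak > 0 and current == last:
            streak += 1          # same screen as the previous poll
        else:
            last = current       # screen changed, restart the count
            streak = 1
        if streak >= required_repeats:
            return current
    return None


# Simulated sequence of screen summaries: two loading frames, a spinner,
# then the finished page.
states = iter(["loading", "loading", "spinner", "ready", "ready", "ready"])
print(wait_until_stable(lambda: next(states), required_repeats=3, max_polls=6))
```

A layout that stays the same across several polls is treated as deliberate, while one that keeps changing is treated as still loading, and the agent gives up after a bounded number of attempts rather than acting on a half-rendered screen.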

To mitigate these issues, strategies are being developed to optimize how models interpret high-latency environments without sacrificing accuracy. This involves creating more efficient ways to sample screen data and prioritize critical interface components over background noise. As these systems become more adept at managing real-time decision-making, the friction between the AI and the operating system will continue to decrease, leading to smoother interactions.
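As a rough illustration of sampling screen data more efficiently, the sketch below keeps a frame only when enough pixels differ from the last frame that was kept. It is a minimal frame-differencing example under stated assumptions, not a description of any vendor's pipeline: frames are plain grayscale grids, and the `tolerance` and `min_change` thresholds are invented for the example.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[int]]  # grayscale pixels (0-255), one inner sequence per row


def changed_fraction(prev: Frame, curr: Frame, tolerance: int = 8) -> float:
    """Fraction of pixels whose brightness differs by more than tolerance."""
    total = 0
    changed = 0
    for row_prev, row_curr in zip(prev, curr):
        for p, c in zip(row_prev, row_curr):
            total += 1
            if abs(p - c) > tolerance:
                changed += 1
    return changed / total if total else 0.0


def select_frames(frames: List[Frame], min_change: float = 0.02) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame."""
    if not frames:
        return []
    kept = [0]  # always keep the first frame as the baseline
    for i in range(1, len(frames)):
        if changed_fraction(frames[kept[-1]], frames[i]) >= min_change:
            kept.append(i)
    return kept


# Four 10x10 frames: a blank screen, the same screen with faint noise,
# and two identical frames in which a small bright element has appeared.
blank = [[0] * 10 for _ in range(10)]
noisy = [row[:] for row in blank]
noisy[0][0] = 5
dialog = [row[:] for row in blank]
for x in range(4):
    dialog[5][x] = 255

print(select_frames([blank, noisy, dialog, dialog]))
```

Only the frames that pass the threshold would be sent on for full interpretation, so faint background noise and repeated identical frames do not consume model time.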

Navigating the Ethical and Governance Frameworks of Autonomous Desktop Operation

As AI agents gain deeper access to operating systems, the privacy implications of systems that monitor and interact with live user screens become a primary concern. The ability of a model to see everything a user sees necessitates robust security standards to prevent unauthorized software actions or data leaks. Establishing clear compliance frameworks is essential for ensuring that these autonomous systems operate within the boundaries of organizational policy and legal requirements.

Security protocols must be standardized to protect sensitive information while still allowing the AI the access it needs to be productive. This involves creating “human-in-the-loop” safeguards where critical or high-risk actions require explicit user approval. By building these safety measures into the core architecture of agentic AI, developers can foster trust among enterprises that are hesitant to grant autonomous models full control over their digital environments.
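A human-in-the-loop safeguard of this kind can be sketched as a gate that sits between the agent's plan and its execution. Everything named here is hypothetical: the `Action` type, the `HIGH_RISK_KINDS` set, and the `approve` and `execute` callbacks stand in for whatever policy and tooling an organization actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple

# Hypothetical list of action kinds that need explicit sign-off; a real
# deployment would derive this from organizational policy.
HIGH_RISK_KINDS = {"delete_file", "send_email", "submit_payment"}


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "click", "type_text", "delete_file"
    target: str  # human-readable description of the element or resource


def requires_approval(action: Action) -> bool:
    """High-risk kinds must be confirmed by a person before they run."""
    return action.kind in HIGH_RISK_KINDS


def run_with_approval(
    actions: Iterable[Action],
    approve: Callable[[Action], bool],
    execute: Callable[[Action], None],
) -> List[Tuple[Action, str]]:
    """Run low-risk actions directly and ask approve() before high-risk ones.

    Returns an audit log of (action, status) pairs, where status is
    "executed" or "blocked".
    """
    log: List[Tuple[Action, str]] = []
    for action in actions:
        if requires_approval(action) and not approve(action):
            log.append((action, "blocked"))
            continue
        execute(action)
        log.append((action, "executed"))
    return log


# Example run in which the reviewer declines every high-risk request.
plan = [Action("click", "Save button"), Action("delete_file", "report.xlsx")]
performed: List[Action] = []
audit = run_with_approval(plan, approve=lambda a: False, execute=performed.append)
for action, status in audit:
    print(action.kind, status)
```

Keeping the decision in a separate `approve` callback means the same gate can prompt a user interactively, consult a policy service, or simply deny by default, and the returned log gives compliance teams a record of what was attempted.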

Forecasting the Future of Software Interaction and Human-AI Workflows

The move toward seamless, multi-application autonomy will likely redefine the professional environment, turning software tools into collaborative digital assistants. Instead of users spending hours on repetitive data entry or cross-platform coordination, they will focus on high-level strategy while the AI handles the mechanical execution. This transition will likely disrupt the current software market, favoring platforms that are designed to be “agent-friendly” and easily navigable by vision-based systems.

Innovations in visual perception will eventually lead to a shift where the operating system itself becomes a secondary layer, hidden behind a unified AI interface. Global workforce productivity is expected to surge as the barriers between different software ecosystems are dismantled by intelligent agents. This evolution will not only change how individuals work but will also alter the economic output of industries that rely heavily on digital administration and complex data management.

Synthesizing the Strategic Impact of Vercept on Anthropic’s Competitive Roadmap

The integration of Vercept’s vision technology with Claude’s advanced reasoning capabilities gives Anthropic a clear path toward market leadership in the agentic AI sector. By securing a team that specializes in the nuances of human-computer interaction, Anthropic is moving beyond text-based interaction. This strategic alignment supports the development of a model that can navigate digital landscapes with far greater reliability. Investors and enterprises are encouraged to look toward these autonomous systems as the next logical step in software evolution, focusing on models that reduce human intervention. The transition toward a vision-centric approach lays the foundation for a future where digital agents manage the complexities of modern work. By prioritizing the intersection of perception and action, Anthropic is bringing the roadmap for autonomous software closer to a reality that could redefine global productivity standards.