Vijay Raina, a leading specialist in enterprise SaaS and software architecture, brings a wealth of experience in helping organizations navigate the complexities of digital transformation. As a thought leader in software design, he focuses on how emerging technologies like generative AI and automated agents can be seamlessly woven into the fabric of daily work to drive productivity. In this discussion, we explore the shift toward visual data storytelling and the rise of integrated AI agents within the modern enterprise ecosystem.

When teams transition from raw data storage to visual reporting, how does performing this work inside a central collaboration tool change the creative process? What specific workflows benefit most from automated visual formats like charts or graphics, and what are the primary friction points this removes for non-designers?

Integrating visual tools like Remix directly into a workspace like Confluence fundamentally shifts the creative process from a linear, multi-app struggle to a fluid, circular workflow. Traditionally, a non-designer had to export data, open a separate visualization tool, and manually tweak settings, which often led to a “context switch” that killed momentum. By automating format recommendations, the software removes the paralysis of choice, allowing a user to see a chart or graphic materialize instantly from their text. This is particularly transformative for product managers and team leads who need to turn dense status reports into digestible summaries for stakeholders. It eliminates the friction of manual data mapping, ensuring that the creative energy is spent on interpreting the story the data tells rather than fighting with the pixels of a bar graph.

Integrating external agents to build prototypes or starter apps directly from technical documents is a significant shift in product development. How should product managers evaluate which data sets are ready for this level of automation, and what steps ensure these AI-generated prototypes align with the original project goals?

Product managers must look for high-fidelity technical documents and clear product ideas within their source of truth to determine readiness for automation. When tools like Lovable or Replit are plugged into the environment, they rely on the Model Context Protocol to read the pages and data they are given, so the output can only be as precise as the source. A “ready” data set is one that is structured enough for an agent to interpret logic flows and user requirements without ambiguity. To ensure alignment, managers should use these agents to create “starter apps” as a baseline, then iteratively refine the prompts against the project’s original goals. This approach turns a static page into a dynamic starting point, where the prototype serves as a functional mirror of the documentation, reducing the gap between a written concept and a working piece of software.
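As a rough illustration of what a readiness check could look like, here is a minimal Python sketch. It is not a feature of Confluence, Lovable, Replit, or the Model Context Protocol; the section names, the ambiguity markers, and the `readiness_report` function are all hypothetical and would need to be adapted to a team's own page templates.

```python
# Hypothetical checklist: these names are assumptions, not part of any product.
REQUIRED_SECTIONS = ("goals", "user requirements", "logic flow")
AMBIGUITY_MARKERS = ("tbd", "todo", "???")


def readiness_report(page_text: str) -> dict:
    """Return which expected sections are missing and which open markers remain."""
    lowered = page_text.lower()
    # A section counts as present if its name appears anywhere in the page.
    missing = [name for name in REQUIRED_SECTIONS if name not in lowered]
    # Any leftover placeholder suggests the page still contains open questions.
    flagged = [marker for marker in AMBIGUITY_MARKERS if marker in lowered]
    return {
        "missing_sections": missing,
        "ambiguity_markers": flagged,
        "ready": not missing and not flagged,
    }
```

A page that names its goals, user requirements, and logic flow, and contains no placeholders, comes back with `ready` set to `True`. Anything else returns the specific gaps a product manager would need to close before handing the page to an agent. A plain substring match is deliberately crude; it only shows the shape of the check.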

Converting internal project notes into external-facing presentations often requires hours of manual editing. What are the best practices for using AI agents to maintain a consistent narrative across slides, and how can teams effectively measure the time saved compared to traditional deck-building methods?

The best practice is to treat a single source-of-truth document as the anchor for the entire narrative, allowing an agent like Gamma to pull context directly from comprehensive project notes. By keeping the AI within the same workspace, the agent maintains the nuances of the team’s language and goals, which prevents the narrative drift that often happens when copying and pasting into standalone slide software. Teams can measure efficiency by tracking the “time-to-first-draft,” which in many cases drops from several hours to just a few minutes of automated generation. Beyond just raw hours, the value is found in the reduction of “busy work,” letting teams focus on the high-level walkthrough for customers rather than the aesthetic formatting of individual slides.
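The “time-to-first-draft” comparison can be sketched in a few lines of Python. This is an assumed way of recording the metric, not a report built into Gamma or any other tool; the `DeckBuild` record, the `"manual"` and `"agent"` labels, and the choice of the median are all illustrative.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class DeckBuild:
    method: str                    # "manual" or "agent" (hypothetical labels)
    minutes_to_first_draft: float  # time from kickoff to a reviewable draft


def median_minutes(builds: list[DeckBuild], method: str) -> float:
    """Median time-to-first-draft for one deck-building method."""
    times = [b.minutes_to_first_draft for b in builds if b.method == method]
    if not times:
        raise ValueError(f"no builds recorded for method {method!r}")
    return median(times)


def time_saved_summary(builds: list[DeckBuild]) -> dict:
    """Compare the two methods and report absolute and relative savings."""
    manual = median_minutes(builds, "manual")
    agent = median_minutes(builds, "agent")
    return {
        "manual_median": manual,
        "agent_median": agent,
        "minutes_saved": manual - agent,
        "percent_saved": round(100 * (manual - agent) / manual, 1),
    }
```

The median is used rather than the mean so that one unusually slow deck does not distort the comparison. Teams would log a `DeckBuild` entry each time a deck is produced and run the summary once both methods have a handful of samples.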

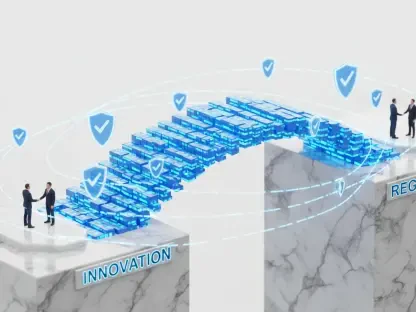

There is a growing movement to embed AI capabilities into existing enterprise software rather than launching standalone platforms. How does this integration affect employee adoption rates, and what challenges do companies face when managing multiple third-party agents within a single workspace ecosystem?

Embedding AI directly into existing workflows significantly boosts adoption rates because it meets employees where they already work, fulfilling the vision that technology should fade into the background. When a tool is added to Jira or Confluence, there is no new login to remember or interface to learn, which lowers the barrier to entry for the average worker. However, the challenge lies in managing the “ecosystem noise” that comes with multiple third-party agents operating in one space. Companies must ensure that these agents—whether they are from OpenAI’s Frontier Alliances or specialized builders like Replit—don’t create conflicting outputs or data silos. The goal is a unified experience where different agents collaborate under a single management layer, similar to how Salesforce or Atlassian are currently structuring their agentic platforms.

What is your forecast for the future of AI-integrated collaboration tools?

I predict that the “document” as we know it will evolve into a living, executable entity that serves as a launchpad for every stage of the business lifecycle. We will see a shift where the software doesn’t just store information but actively anticipates the next step, automatically generating the code, the visual assets, and the client presentations the moment a project note is finalized. Integration will become so deep that the distinction between a “collaboration tool” and an “app builder” will vanish entirely. Ultimately, this will lead to a “vibe-coding” era for the enterprise, where the speed of innovation is limited only by the clarity of a team’s ideas, not the technical hurdles of their software stack.