The speculative gold rush that once defined the artificial intelligence sector has finally met its match in a market demanding tangible architectural depth over aesthetic polish. For several seasons, the landscape was flooded with software that functioned primarily as a cosmetic layer over third-party large language models. These entities capitalized on the novelty of generative responses, but the market has rapidly transitioned from a period of experimental frenzy to one of disciplined scrutiny. Capital is no longer flowing toward any startup with an API connection; instead, it is seeking out the few that provide genuine utility and long-term structural value.

A thin wrapper refers to a business model that relies almost exclusively on the intelligence of an external foundation model without adding significant proprietary logic or data. Venture capitalists have recognized that such companies lack a sustainable competitive advantage, as their core functionality can be replicated by a competitor or the model provider itself in a matter of days. This realization has sparked a flight to quality, forcing a redefinition of funding criteria. Investors now prioritize vertical depth and proprietary defensibility, looking for firms that do more than just relay prompts and display answers.

The shift is most visible in how market players have moved away from horizontal breadth. In the earlier stages of the AI boom, software attempted to be everything to everyone, but those generalized tools are now being undercut by more specialized alternatives. The focus has turned toward companies that embed AI within complex, industry-specific workflows where general-purpose models struggle to compete without significant external context.

The Death of Novelty and the Rise of Architectural Resilience

The Erosion of UI-Centric Moats: The “Cloneware” Crisis

For years, a sleek user interface was considered a primary differentiator in the software-as-a-service world, yet in the current climate, a polished UI is no longer a sustainable moat. The proliferation of AI-assisted coding tools has significantly lowered the barriers to entry, making it possible for small teams to replicate complex traditional codebases with unprecedented speed. This has led to a cloneware crisis where dozens of startups offer identical features, essentially commoditizing the visual and functional layers of the software stack.

Furthermore, investors are increasingly rejecting products that rely on human stickiness or manual engagement metrics. The traditional goal of keeping a user on a platform for as long as possible is being replaced by the demand for automated, agentic outcomes. A product that requires a human to constantly prompt and supervise it is viewed as a liability rather than an asset. The new gold standard is software that works autonomously in the background, delivering a finished result rather than just a helpful suggestion.

Quantifying the Shift: Market Data and the New Economic Reality

The financial performance of thin startups has plummeted compared to their deep, AI-native counterparts. Recent market data indicates a sharp valuation collapse for firms that failed to move beyond the wrapper stage, while those with proprietary infrastructure continue to command premiums. This divergence highlights a new economic reality where the cost of doing business is scrutinized more than ever. The transition from per-seat pricing to usage-based models is a direct response to this, as it aligns the cost of the software with the actual value it creates for the enterprise.

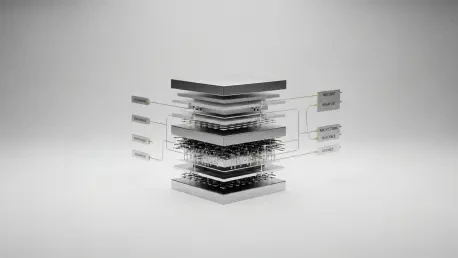

Architectural efficiency has also become a critical factor in determining gross margin health. The inference tax, or the recurring cost of querying a foundation model, can quickly erode the profits of a business that lacks its own optimized infrastructure. Investors are now favoring firms that demonstrate the ability to route tasks to smaller, more efficient models or utilize intelligent caching to reduce dependency on expensive third-party APIs. Those who cannot manage these margins are finding it nearly impossible to secure follow-on funding.
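The routing and caching idea described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the `ModelTier` and `CostAwareRouter` names, the word-count heuristic for deciding which model to use, and the cost figures are hypothetical, and the handlers stand in for real model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class ModelTier:
    """A hypothetical model endpoint with an illustrative per-call cost."""
    name: str
    cost_per_call: float
    handler: Callable[[str], str]  # stand-in for a real inference call


@dataclass
class CostAwareRouter:
    """Routes short prompts to a cheaper model and caches repeated prompts."""
    small: ModelTier
    large: ModelTier
    max_small_words: int = 50  # assumed threshold; a real system would tune this
    cache: Dict[str, str] = field(default_factory=dict)
    spend: float = 0.0

    def answer(self, prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share a cache entry.
        key = " ".join(prompt.lower().split())
        if key in self.cache:
            return self.cache[key]  # cache hit: no inference cost incurred

        # Naive routing heuristic: short prompts go to the small model.
        word_count = len(key.split())
        tier = self.small if word_count <= self.max_small_words else self.large

        result = tier.handler(prompt)
        self.spend += tier.cost_per_call
        self.cache[key] = result
        return result
```

In this sketch a repeated question costs nothing after the first call, and only prompts above the length threshold reach the expensive tier. A production system would likely route on task type or a learned classifier rather than word count, and would bound or expire the cache.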

Strategic Obstacles in a Commoditized AI Environment

Integration evaporation has fundamentally changed how software interacts with other tools. As standardized protocols like the Model Context Protocol and various assistant APIs become the norm, the ability to connect different software systems has become a utility rather than a unique selling point. Startups that once built their entire value proposition on being a connector are finding that their moats have dried up as foundation model providers build these integrations directly into their core offerings.

Escaping the system-of-record trap requires moving beyond simple copilots that only provide advice and toward agents that can execute tasks. Many early AI tools failed because they remained secondary to the primary software where work actually happened. To survive, a company must become the primary destination for a specific business process, owning the entire workflow from start to finish. This requires moving away from superficial logic and toward specialized fine-tuning that reflects the nuances of a specific industry or department.

Governance, Compliance, and the Data Moat Mandate

Proprietary data access has emerged as the most reliable defense against commoditization, particularly in heavily regulated sectors. In industries such as healthcare or finance, a general-purpose model is often insufficient due to privacy concerns and the need for high-precision accuracy. Companies that have secured privileged access to unique datasets or have established loops where their software improves with every customer interaction are the ones currently winning investor confidence.

Furthermore, the shift toward deploying AI within a customer’s Virtual Private Cloud has become a non-negotiable requirement for enterprise-grade adoption. Organizations are no longer willing to send their sensitive information to a third-party server without strict governance and auditability. Startups that built their architecture around these security standards from the beginning have a massive advantage over those trying to retrofit compliance into a thin, cloud-based wrapper.

The Future of AI Venture Capital: Verticalization and Agentic Orchestration

The emerging trend of vertical AI suggests that the next generation of winners will be those that own the entire job to be done for a specific niche. Rather than providing a tool for writing emails, these companies provide a platform for managing the entire customer relationship within a specific field like renewable energy or autonomous logistics. This vertical focus allows for much deeper integration and the creation of specialized agents that understand the unique jargon and regulatory requirements of that field.

Model-agnostic resilience is also becoming a key trait for durable enterprises. The ability to swap foundation providers to optimize for cost, speed, or accuracy ensures that a company is not tied to the fate of a single model developer. This flexibility, combined with a focus on measurable ROI over simple engagement metrics, is what defines the current investment thesis. The market is now looking for autonomous industrial maintenance platforms and specialized telemetry tools that offer clear financial benefits to the end user.
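One way to picture model-agnostic design is a thin internal interface that application code depends on, with providers registered behind it. The sketch below is illustrative only: `CompletionProvider`, `ProviderRegistry`, and the stub provider are hypothetical names, not any vendor's API.

```python
from typing import Dict, Optional, Protocol


class CompletionProvider(Protocol):
    """Minimal interface the application depends on (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


class ProviderRegistry:
    """Holds interchangeable providers and delegates to the active one."""

    def __init__(self) -> None:
        self._providers: Dict[str, CompletionProvider] = {}
        self._active: Optional[str] = None

    def register(self, name: str, provider: CompletionProvider) -> None:
        self._providers[name] = provider
        if self._active is None:
            self._active = name  # first registered provider becomes the default

    def use(self, name: str) -> None:
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no provider registered")
        return self._providers[self._active].complete(prompt)


class EchoProvider:
    """Stub standing in for a real foundation-model client."""

    def __init__(self, tag: str) -> None:
        self.tag = tag

    def complete(self, prompt: str) -> str:
        return f"[{self.tag}] {prompt}"
```

Because callers only see `ProviderRegistry.complete`, switching providers for cost, speed, or accuracy is a single `use(...)` call rather than a rewrite of application code.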

Synthesis: Building Substance in an Era of AI Abundance

The structural shift in venture capital toward deep workflow integration and proprietary context marks a necessary maturation of the industry. Investors have moved away from the superficial allure of generative demos and now demand businesses that offer durable value and architectural integrity. This transition favors founders who prioritize mission-critical systems over simple UI wrappers, so that the next wave of enterprise software is built on a foundation of substance.

The era of cloneware is effectively ending as the market recognizes that connectivity and basic model access have become standardized utilities. The next steps for the industry are a deeper focus on data sovereignty and the development of agentic systems that can operate without constant human intervention. These more rigorous standards support the healthy evolution of the global AI economy by separating experimental novelties from essential infrastructure. By moving toward value-aligned pricing and vertical specialization, the sector is establishing a more stable and predictable path for growth.